I want to keep this blog tech-focussed, so other than the occasional bit of self-promotion I’ll be posting future Microservice Analytics posts over on its new blog. Hope to see you there!

Month: March 2016

Signalling API Unavailability

When upgrading a website it’s often useful to be able to signal to users that your service is offline for maintenance, either in part or in its entirety. That’s quite straightforward to implement unless you’ve got something like an AngularJS or React app that could well be cached in the browser and that actually wants to respond to 503 status codes returned from a web-based API. Then CORS has a habit of getting in the way: if the 503 response doesn’t carry the right CORS headers, the browser blocks it and your app sees a generic network failure rather than the status code.

To help with that I’ve just pushed this super simple and lightweight ASP.NET website to GitHub. It responds with a 503 status code to any request made of it while ensuring that the CORS protocol succeeds, meaning that the 503 status code makes its way through to your own error handling.
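As a rough illustration of the idea (not the code from the GitHub project), here is a minimal TypeScript sketch of how such a response could be built. Every name in it is hypothetical, and it rests on one assumption: the browser only lets the real request through if the CORS preflight gets a successful status, so the sketch answers `OPTIONS` preflights with a 204 and returns the 503 for everything else. The `Retry-After` value is purely an example.

```typescript
// Hypothetical sketch: these names are illustrative and are not taken from
// the ASP.NET project described above.
interface IncomingRequest {
  method: string;
  origin?: string;           // value of the Origin request header
  requestedMethod?: string;  // Access-Control-Request-Method (preflight only)
  requestedHeaders?: string; // Access-Control-Request-Headers (preflight only)
}

interface MaintenanceResponse {
  status: number;
  headers: Record<string, string>;
  body: string;
}

function buildMaintenanceResponse(request: IncomingRequest): MaintenanceResponse {
  const headers: Record<string, string> = {};

  // Echo the caller's origin so the browser exposes the response to script.
  if (request.origin) {
    headers["Access-Control-Allow-Origin"] = request.origin;
    headers["Vary"] = "Origin";
  }

  const requestedMethod = request.requestedMethod;
  if (request.method.toUpperCase() === "OPTIONS" && requestedMethod !== undefined) {
    // Assumption: the preflight itself must succeed, otherwise the browser
    // never sends the real request and the app never sees the 503.
    headers["Access-Control-Allow-Methods"] = requestedMethod;
    if (request.requestedHeaders) {
      headers["Access-Control-Allow-Headers"] = request.requestedHeaders;
    }
    return { status: 204, headers, body: "" };
  }

  // Every real request is told the service is unavailable.
  headers["Retry-After"] = "120"; // example value, in seconds
  return { status: 503, headers, body: "Service unavailable: maintenance in progress" };
}
```

With something along these lines in place, a cached single-page app that calls the API during an upgrade can read the 503 status in its normal error handling and show a maintenance message.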

It’s ideal for hosting in an Azure deployment slot during upgrades that swap slots.

Note: an alternative approach would be to use the URL rewriter in web.config. It’s not particularly intuitive or, to my taste, readable, but I believe it can be configured to perform the same task.

Accidental Fish Application Support v3.3.0 Release

Last night I published a minor update to this framework to GitHub and NuGet that adds new timer capabilities via the new ITimerFactory interface.

An interval timer is available that runs an action, then pauses for the specified interval on completion, and runs the action again until cancelled (either by the action itself or by a cancellation token). A regular metronomic timer is available that runs an action every n seconds irrespective of how long the action takes. Importantly, in the latter case, if the action takes longer than the duration of the timer to complete it will be cancelled to prevent overlapping runs from compounding into other problems (excessive CPU usage, out of memory, etc.).
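To make the difference between the two behaviours concrete, here is a small TypeScript sketch of the semantics described above. It is not the framework’s `ITimerFactory` API: the function names, the `CancellationToken` shape and the cooperative style of cancellation (the action is expected to check its token) are all assumptions made for illustration.

```typescript
// Hypothetical names throughout; this only mimics the behaviour described above.
interface CancellationToken {
  cancelled: boolean;
}

type TimerAction = (token: CancellationToken) => Promise<void>;

const sleep = (ms: number): Promise<void> =>
  new Promise<void>((resolve) => setTimeout(resolve, ms));

// Interval timer: run the action, wait `delayMs` after it completes, and
// repeat until the token is cancelled (by the caller or by the action itself).
async function runIntervalTimer(
  action: TimerAction,
  delayMs: number,
  token: CancellationToken,
): Promise<void> {
  while (!token.cancelled) {
    await action(token);
    if (!token.cancelled) {
      await sleep(delayMs);
    }
  }
}

// Metronomic timer: start the action every `periodMs` regardless of how long
// it takes. A run still in flight when the next tick arrives has its own
// token cancelled so that runs never pile up.
function startMetronomicTimer(
  action: TimerAction,
  periodMs: number,
  token: CancellationToken,
): void {
  let current: CancellationToken | null = null;

  const tick = (): void => {
    if (token.cancelled) {
      clearInterval(handle);
      if (current) current.cancelled = true;
      return;
    }
    if (current) current.cancelled = true; // previous run overran its slot
    const runToken: CancellationToken = { cancelled: false };
    current = runToken;
    action(runToken)
      .catch(() => undefined) // a failed run should not stop the timer
      .then(() => {
        if (current === runToken) current = null;
      });
  };

  const handle = setInterval(tick, periodMs);
  tick(); // first run starts immediately
}
```

The interval version can never overlap with itself because the delay only starts once the action has finished. The metronomic version trades that guarantee for a steady beat, which is why it needs the per-run cancellation.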

I’ve also added an IBackoffFactory interface that allows the backoff policies to be created with custom backoff timings. The backoff policies continue to be directly injectable with their default timings.
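As a sketch of what a factory with custom timings might look like in spirit, the TypeScript below creates a backoff policy from a list of delays and falls back to defaults when none are supplied. The names, the default timings and the "stay on the last delay" behaviour are assumptions for illustration and are not the `IBackoffFactory` API itself.

```typescript
// Hypothetical sketch; not the framework's IBackoffFactory interface.
interface BackoffPolicy {
  nextDelayMs(): number; // delay to wait before the next attempt
  reset(): void;         // call after a successful attempt
}

// Example defaults only; the framework's real default timings are not shown here.
const exampleDefaultDelaysMs: readonly number[] = [100, 250, 500, 1000, 5000];

function createBackoffPolicy(
  delaysMs: readonly number[] = exampleDefaultDelaysMs,
): BackoffPolicy {
  if (delaysMs.length === 0) {
    throw new Error("At least one backoff delay is required");
  }
  const delays = [...delaysMs]; // copy so later changes by the caller have no effect
  let index = 0;

  return {
    nextDelayMs(): number {
      const delay = delays[index] as number;
      // Step through the list, then stay on the final (longest) delay.
      if (index < delays.length - 1) index += 1;
      return delay;
    },
    reset(): void {
      index = 0;
    },
  };
}

// Usage: custom timings for a queue that should be polled fairly aggressively.
const policy = createBackoffPolicy([10, 20, 40]);
```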

I hope these additions are useful. Feedback is, as ever, welcome.

Microservice Analytics – Update

For those who’ve got in touch asking for a beta code – thank you for your interest, it’s very much appreciated and I’m nearly ready to start issuing them.

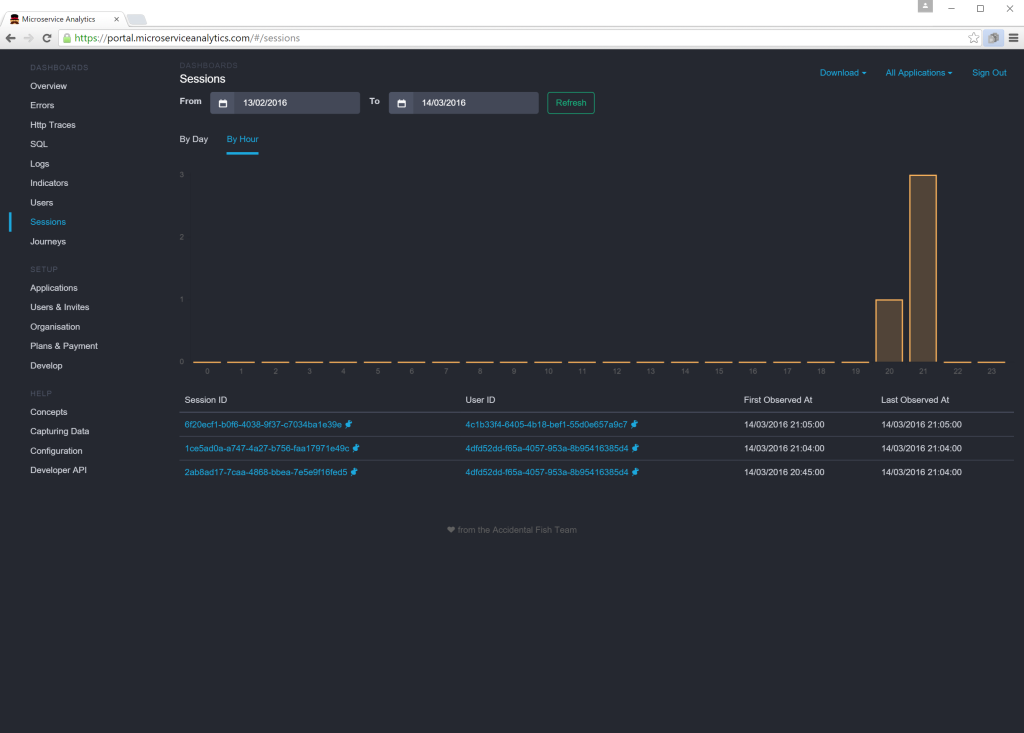

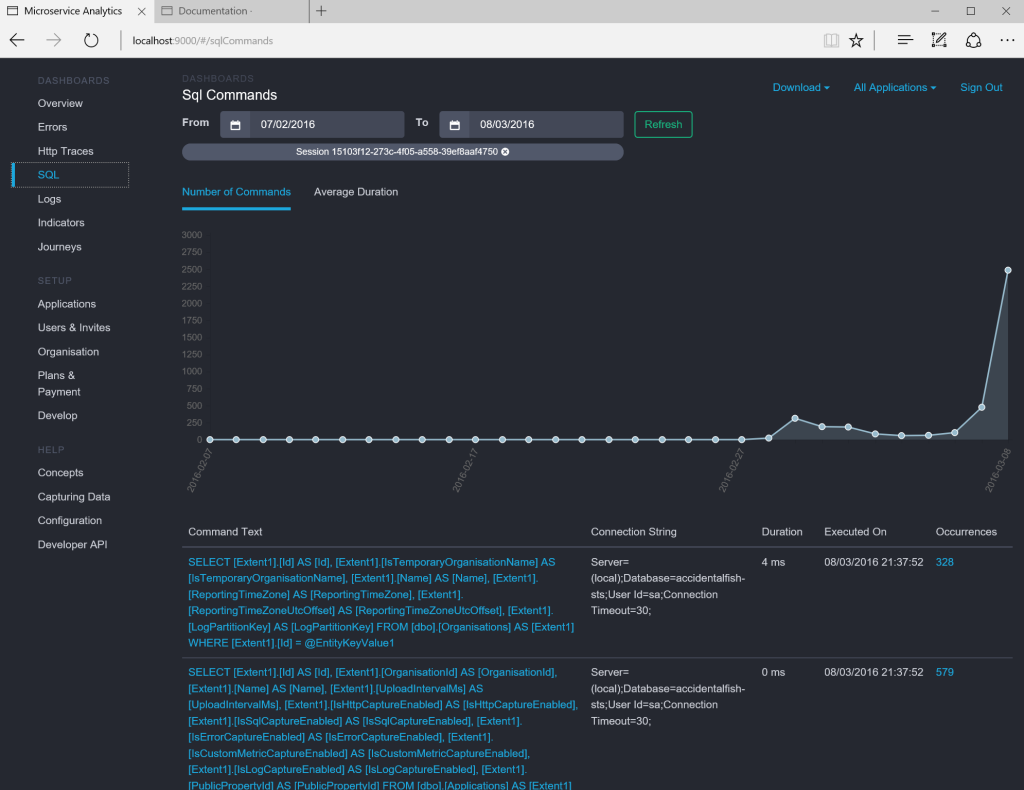

Before releasing them I wanted to make sure I was capturing user and session data properly and that it was being integrated deeply with the other measures and data I capture. Happily this is all working and I’ve just deployed it to my live site.

In this initial release, unless you configure data capture otherwise, magic numbers will be generated for users and sessions (you can override this to provide your own IDs if you so choose). These are correlated with everything else that is happening so that it is possible to look at the data from both perspectives:

- What are your users doing and what system activity is that generating in your applications?

- Given a system activity (say a SQL command or Web API call), which user initiated it and/or under which session?
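The two perspectives above can be sketched in a few lines of TypeScript. This is only an illustration of the correlation idea, not the product’s data model or API: the event shape, the function names and the format of the generated IDs are all hypothetical.

```typescript
// Hypothetical sketch of correlating captured activity with users and sessions.
interface ActivityEvent {
  id: string;
  kind: string; // e.g. "sql" or "webapi"
  userId: string;
  sessionId: string;
}

let generatedCount = 0;

// Use the caller's own ID when one is supplied, otherwise generate a placeholder.
function resolveId(prefix: string, supplied?: string): string {
  if (supplied !== undefined) return supplied;
  generatedCount += 1;
  return `${prefix}-${generatedCount}`;
}

// Perspective 1: what system activity is a given user generating?
function activityForUser(events: readonly ActivityEvent[], userId: string): ActivityEvent[] {
  return events.filter((event) => event.userId === userId);
}

// Perspective 2: which user and session initiated a given system activity?
function initiatorOf(
  events: readonly ActivityEvent[],
  eventId: string,
): { userId: string; sessionId: string } | undefined {
  const match = events.find((event) => event.id === eventId);
  return match ? { userId: match.userId, sessionId: match.sessionId } : undefined;
}

// Usage: one user with a generated ID, one with an ID supplied by the application.
const anonymousUser = resolveId("user");
const knownUser = resolveId("user", "alice@example.com");
const capturedEvents: ActivityEvent[] = [
  { id: "e1", kind: "webapi", userId: anonymousUser, sessionId: resolveId("session") },
  { id: "e2", kind: "sql", userId: knownUser, sessionId: resolveId("session") },
];
```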

There are some specific views to help with the above. In addition, you can tag any user or session and the whole user interface is then filtered by that tag, allowing you to explore this subset of data quite organically.

I’ve attached a couple of screenshots below (taken immediately after deploying the new code to live – so not many users and sessions captured yet!) and as I mentioned earlier I’m nearly ready to share those invite codes, thanks for your patience.

Microservice Analytics – New Analytic / Diagnostic Solution

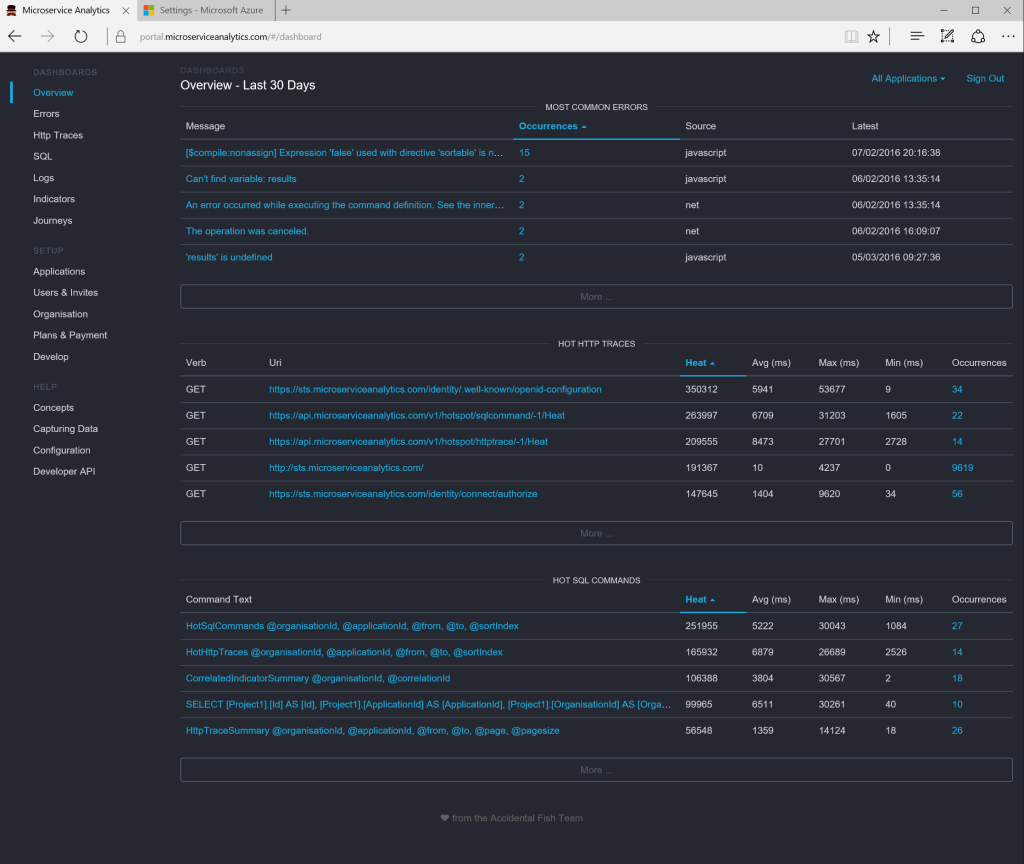

I spend a lot of time working with highly distributed systems that use an SOA / microservice type architecture and that are increasingly accessed through clients such as native apps, Cordova apps, or JavaScript SPAs. It can be challenging to understand what is going on across multiple servers and devices when faults occur or when you simply need to understand how something behaves.

I wanted a tool that was fairly non-invasive, had a highly interactive user interface, captured a wide breadth of data, and was reasonably priced. There were solutions that did bits of what I wanted (particularly at the high end in terms of price) but nothing that really hit the sweet spot for me, particularly in terms of being able to drill through and across data to form a coherent picture.

This sort of thing really is my cup of tea as a developer and so I went off and built it, coming at it from two directions:

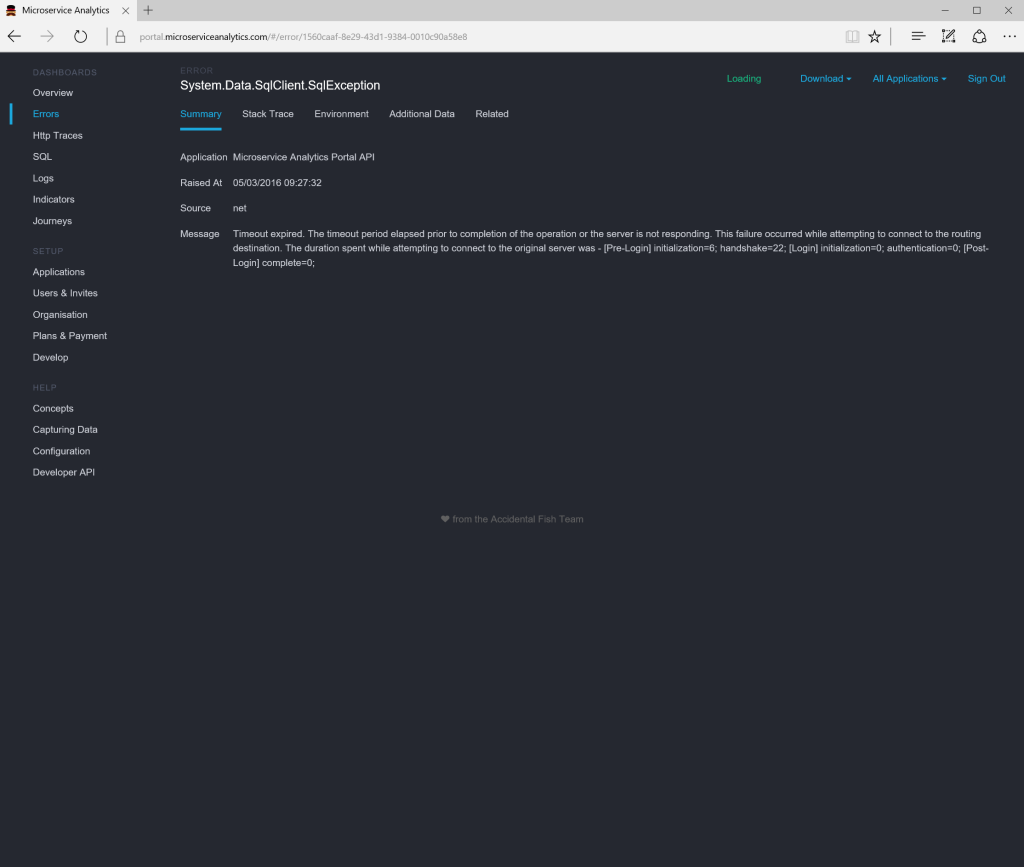

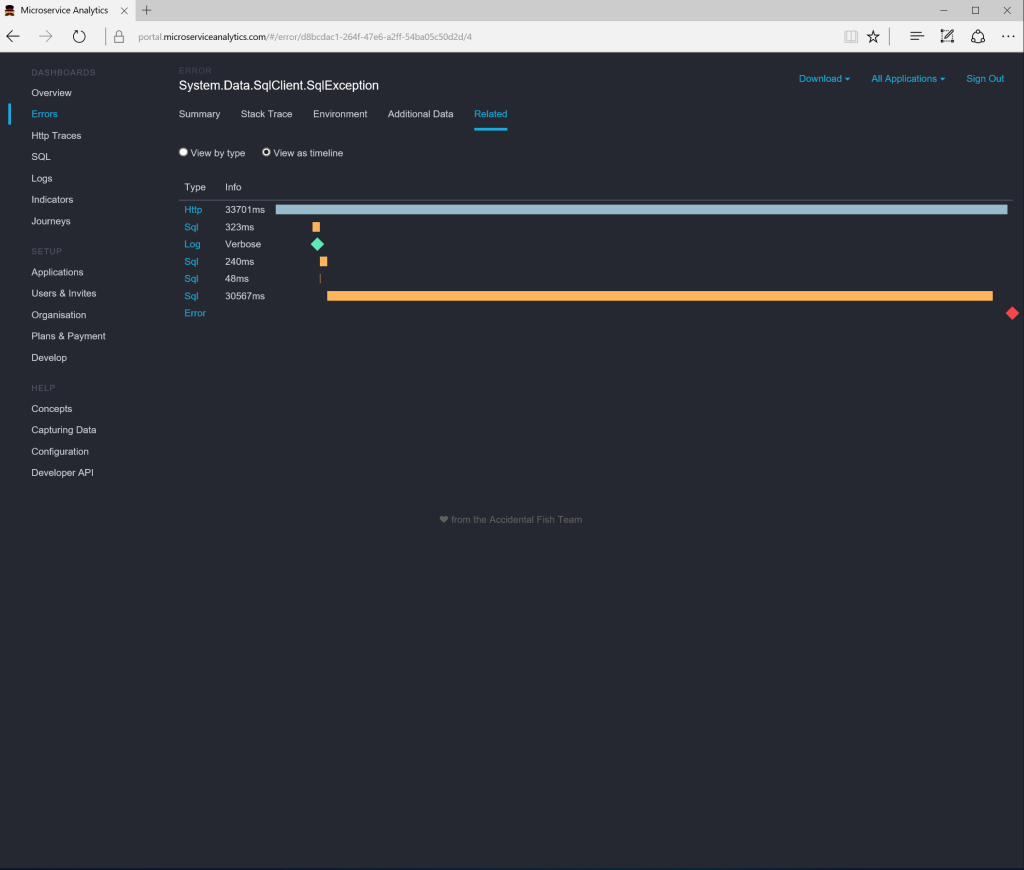

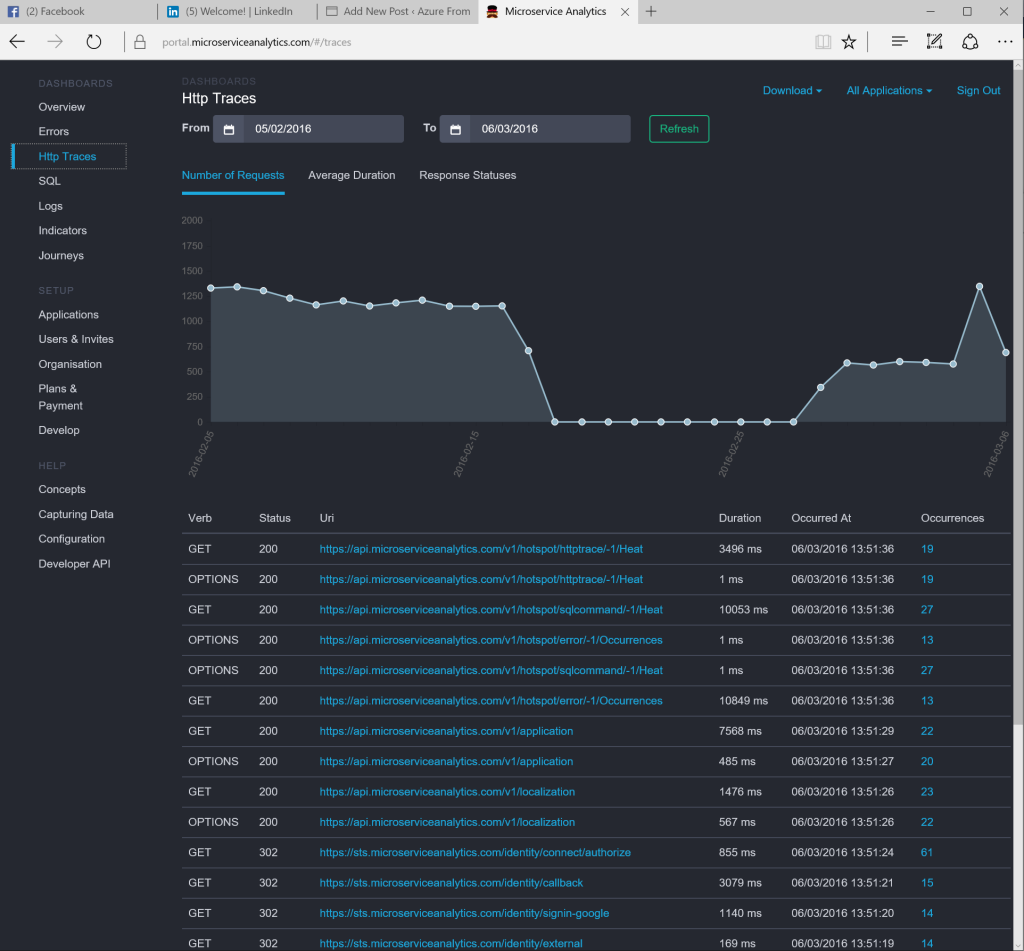

- When errors occur in the system I want to know everything that was happening around them across devices and servers and to be able to drill into it all and find related items.

- To understand how users are behaving and experiencing the system and to be able to jump off and drill into areas of interest.

I’ve been dogfooding it for a while now. The first part is pretty much ready to roll, and the second part has most of the plumbing in place and will be expanded over the next few weeks. It’s tentatively called Microservice Analytics and there are some screenshots below to whet your appetite.

It’s one of those projects that is almost endless in terms of where you might take it (I’ve got a list of enhancements as long as my arm just from my own usage) but at some point I need some feedback and to figure out if it’s useful to anyone but me, and so I’m just starting to issue beta invite codes.

If you’re interested get in touch with a comment below.
