Over the last four parts of this series we’ve taken a simple application built around a layered architecture and restructured it into one based around dispatching queries and commands as state through a mediator.

We’ve seen many of the advantages this can bring to a codebase, such as reducing repetition and allowing for a clear decomposition into business, or service, oriented modules.

In this final part I’ll demonstrate how this pattern can support an application through the various stages of its lifecycle. The early stages of a software development project are often susceptible to a high degree of change. If it’s a new product under development then the challenge is often around establishing market fit (be that internal or external) without burning through the entire budget. Additionally, if the problem domain is new, it’s likely that the first attempt at drawing out bounded contexts will contain errors, and if the system is built as fully isolated components then change can be expensive. In either case, keeping the cost of development and change low in the early phases can lead to much more effective use of a project’s budget.

In the system we’ve been working on we’ve developed three sub-systems: a checkout, a shopping cart and a product store. Essentially we have a modular monolith.

In this part we’re going to assume that we’re finding that our product store is coming under a lot of strain and we are going to pull it out into a micro-service so that we can scale it independently. And we’re going to make this change without altering any consuming business logic code at all.

In our system we make use of the store in two places through the dispatch of GetStoreProductQuery queries. Firstly it is represented in the primary API as an endpoint that can be called by clients in the ProductController class:

    [Route("api/[controller]")]
    public class ProductController : AbstractCommandController
    {
        public ProductController(ICommandDispatcher dispatcher) : base(dispatcher)
        {
        }

        [HttpGet("{productId}")]
        [ProducesResponseType(typeof(StoreProduct), 200)]
        public async Task<IActionResult> Get([FromRoute] GetStoreProductQuery query) => await ExecuteCommand(query);
    }

Secondly it is also used to provide validation of products within the handler for the AddToCartCommand in the AddToCartCommandHandler class:

    public async Task<CommandResponse> ExecuteAsync(AddToCartCommand command, CommandResponse previousResult)
    {
        Model.ShoppingCart cart = await _repository.GetActualOrDefaultAsync(command.AuthenticatedUserId);

        // Validate the product by dispatching a query to the store sub-system
        StoreProduct product = (await _dispatcher.DispatchAsync(new GetStoreProductQuery{ProductId = command.ProductId})).Result;
        if (product == null)
        {
            _logger.LogWarning("Product {0} can not be added to cart for user {1} as it does not exist", command.ProductId, command.AuthenticatedUserId);
            return CommandResponse.WithError($"Product {command.ProductId} does not exist");
        }

        // Copy the existing items, append the new one and save the cart
        List<ShoppingCartItem> cartItems = new List<ShoppingCartItem>(cart.Items);
        cartItems.Add(new ShoppingCartItem
        {
            Product = product,
            Quantity = command.Quantity
        });
        cart.Items = cartItems;
        await _repository.UpdateAsync(cart);
        return CommandResponse.Ok();
    }

To make our change, the first thing we need to do is to be able to execute our command inside a different host. We’ll use an Azure Function that accepts the ProductId required by our GetStoreProductQuery query. The code for this function is shown below:

    public static class GetStoreProduct
    {
        private static readonly IServiceProvider ServiceProvider;
        private static readonly AsyncLocal<ILogger> Logger = new AsyncLocal<ILogger>();

        // Builds the IoC container and registers the store in server mode
        static GetStoreProduct()
        {
            IServiceCollection serviceCollection = new ServiceCollection();
            MicrosoftDependencyInjectionCommandingResolver resolver = new MicrosoftDependencyInjectionCommandingResolver(serviceCollection);
            ICommandRegistry registry = resolver.UseCommanding();
            serviceCollection.UseCoreCommanding(resolver);
            serviceCollection.UseStore(() => ServiceProvider, registry, ApplicationModeEnum.Server);
            // Resolve ILogger to the logger of the current invocation
            serviceCollection.AddTransient((sp) => Logger.Value);
            ServiceProvider = resolver.ServiceProvider = serviceCollection.BuildServiceProvider();
        }

        [FunctionName("GetStoreProduct")]
        public static async Task<IActionResult> Run([HttpTrigger(AuthorizationLevel.Anonymous, "get", "post", Route = null)]HttpRequest req, ILogger logger)
        {
            Logger.Value = logger;
            logger.LogInformation("C# HTTP trigger function processed a request.");

            // Execute the query directly rather than dispatching it again
            IDirectCommandExecuter executer = ServiceProvider.GetService<IDirectCommandExecuter>();
            GetStoreProductQuery query = new GetStoreProductQuery
            {
                ProductId = Guid.Parse(req.GetQueryParameterDictionary()["ProductId"])
            };
            CommandResponse<StoreProduct> result = await executer.ExecuteAsync(query);
            return new OkObjectResult(result);
        }
    }

Our static constructor sets up our IoC container and should be fairly familiar code by now. (Azure Functions actually run on App Service instances and you can share state between them, though there are few guarantees and you can debate at length how “serverless” this makes things. AWS Lambda is much the same.)

Our function entry point does something different. It creates an instance of our GetStoreProductQuery from the query parameters supplied, but rather than dispatch it through the ICommandDispatcher interface we’ve seen before it executes it using a reference to an IDirectCommandExecuter resolved from our IoC container. This instructs the command framework to execute the command without any dispatch semantics. That means any logging of the dispatch portions of the command flow won’t be replicated by this function, and it is slightly more efficient. It’s worth noting that you can dispatch again here if you need to, though generally you would take the approach I am showing here.
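
To make the difference between dispatching and executing directly a little more concrete, here is a minimal, self-contained sketch. None of these types come from the commanding framework: ToyDispatcher, ToyExecuter and GetProductNameQuery are hypothetical stand-ins that only mimic the idea that dispatch-side work (logging in this toy) happens on the calling side and is skipped when the handler is run directly.

```csharp
using System;
using System.Collections.Generic;
using System.Threading.Tasks;

// All types here are hypothetical stand-ins, not the commanding framework's API.
public sealed class GetProductNameQuery
{
    public Guid ProductId { get; set; }
}

// Plays the part of a direct executer: it runs the handler and nothing more.
public sealed class ToyExecuter
{
    private readonly Func<GetProductNameQuery, Task<string>> _handler;

    public ToyExecuter(Func<GetProductNameQuery, Task<string>> handler) => _handler = handler;

    public Task<string> ExecuteAsync(GetProductNameQuery query) => _handler(query);
}

// Plays the part of a dispatcher: dispatch-side work first, then execution.
public sealed class ToyDispatcher
{
    private readonly ToyExecuter _executer;
    private readonly List<string> _log;

    public ToyDispatcher(ToyExecuter executer, List<string> log)
    {
        _executer = executer;
        _log = log;
    }

    public async Task<string> DispatchAsync(GetProductNameQuery query)
    {
        _log.Add("dispatching " + query.ProductId); // dispatch-side logging
        return await _executer.ExecuteAsync(query);
    }
}

public static class DirectExecutionDemo
{
    public static async Task<List<string>> RunAsync()
    {
        List<string> log = new List<string>();
        ToyExecuter executer = new ToyExecuter(q =>
        {
            log.Add("handling " + q.ProductId);
            return Task.FromResult("Widget");
        });
        ToyDispatcher dispatcher = new ToyDispatcher(executer, log);
        GetProductNameQuery query = new GetProductNameQuery { ProductId = Guid.Empty };

        await dispatcher.DispatchAsync(query); // adds a dispatch entry and a handler entry
        await executer.ExecuteAsync(query);    // adds a handler entry only
        return log;
    }
}
```

Calling DirectExecutionDemo.RunAsync() leaves three entries in the log: one from the dispatcher and two from the handler. In the real system the first call lives in the consuming application and the second inside the function, so the dispatch-side work is only done once.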

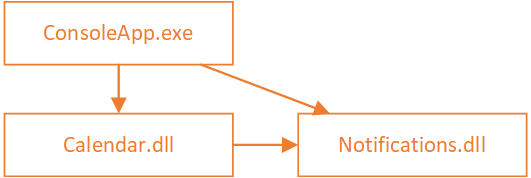
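
If you want to exercise the function on its own before touching any dispatcher configuration, a small client along the following lines should do. This is only a sketch: GetStoreProductClient is a hypothetical helper that is not part of the tutorial code, and it assumes the function host is running locally at the same address the client registration uses later in this part. The ProductId query parameter name matches what the function reads.

```csharp
using System;
using System.Net.Http;
using System.Threading.Tasks;

// Hypothetical helper, not part of the tutorial code or the commanding framework.
public static class GetStoreProductClient
{
    // Assumed local address of the Azure Functions host.
    private static readonly Uri FunctionUri = new Uri("http://localhost:7071/api/GetStoreProduct");

    // The function reads the product ID from a query parameter named ProductId.
    public static Uri BuildRequestUri(Guid productId)
    {
        UriBuilder builder = new UriBuilder(FunctionUri)
        {
            Query = "ProductId=" + Uri.EscapeDataString(productId.ToString())
        };
        return builder.Uri;
    }

    // Returns the raw response body, which is the serialised command response.
    public static async Task<string> GetProductBodyAsync(HttpClient client, Guid productId)
    {
        HttpResponseMessage response = await client.GetAsync(BuildRequestUri(productId));
        response.EnsureSuccessStatusCode();
        return await response.Content.ReadAsStringAsync();
    }
}
```

Passing in the HttpClient rather than creating one per call keeps the helper easy to test and avoids exhausting sockets under load.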

To support this new approach I’ve also made a change to the IServiceCollectionsExtensions UseStore registration method inside the Store.Application project so that it can be supplied an enum that determines how our command should be handled: in process (as we’ve been doing up until now), as a client of a remote service, or as a server (as we have done above). The enum is used to register the command in one of two ways, and this is the key change to the existing code that enables us to remote the command:

    if (applicationMode == ApplicationModeEnum.InProcess || applicationMode == ApplicationModeEnum.Server)
    {
        commandRegistry.Register<GetStoreProductQueryHandler>();
    }
    else if (applicationMode == ApplicationModeEnum.Client)
    {
        // this configures the command dispatcher to send the command over HTTP and wait for the result
        Uri functionUri = new Uri("http://localhost:7071/api/GetStoreProduct");
        commandRegistry.Register<GetStoreProductQuery, CommandResponse<StoreProduct>>(() =>
        {
            IHttpCommandDispatcherFactory httpCommandDispatcherFactory = serviceProvider().GetService<IHttpCommandDispatcherFactory>();
            return httpCommandDispatcherFactory.Create(functionUri, HttpMethod.Get);
        });
    }

Both the in-process and server modes continue to register the handler as they have done before. However, when the application mode is set to client the registration takes a different form. Rather than register the handler, we supply the type of the command and the type of the result as generic type parameters and then set up a lambda that will resolve an instance of an IHttpCommandDispatcherFactory and create an HTTP dispatcher with the URI of the function and the HTTP verb to use. These interfaces can be found within the NuGet package AzureFromTheTrenches.Commanding.Http, which I’ve added to the Store.Application project.

Registering in this way instructs the commanding system to dispatch the command using the supplied dispatcher (HTTP in this case) rather than attempt to execute it locally. All the other framework features around the dispatch process continue to behave as usual and, as we saw earlier, you can pick this up on the other side of the HTTP call with the IDirectCommandExecuter.
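
The idea behind the two registration styles can be sketched with a toy registry. Everything here is hypothetical and deliberately much simpler than the real framework: ToyRegistry, ToyApplicationMode and ProductQuery are illustrative names, and a lambda stands in for the HTTP call. The point is only that the consuming code (LookUpAsync) is identical whichever mode is chosen at start-up.

```csharp
using System;
using System.Collections.Generic;
using System.Threading.Tasks;

// Hypothetical stand-ins for illustration only; the real framework's types differ.
public enum ToyApplicationMode { InProcess, Client, Server }

public sealed class ProductQuery
{
    public Guid ProductId { get; set; }
}

// Maps a command type to "something that produces a result".
// Whether that something runs locally or calls out is invisible to callers.
public sealed class ToyRegistry
{
    private readonly Dictionary<Type, Func<object, Task<object>>> _routes =
        new Dictionary<Type, Func<object, Task<object>>>();

    public void Register<TCommand, TResult>(Func<TCommand, Task<TResult>> route)
    {
        _routes[typeof(TCommand)] = async command => await route((TCommand)command);
    }

    public async Task<TResult> DispatchAsync<TResult>(object command)
    {
        return (TResult)await _routes[command.GetType()](command);
    }
}

public static class ToyStoreRegistration
{
    public static void UseStore(ToyRegistry registry, ToyApplicationMode mode,
        Func<ProductQuery, Task<string>> remoteCall)
    {
        if (mode == ToyApplicationMode.Client)
        {
            // Client: route the query to a remote call (HTTP in the article).
            registry.Register<ProductQuery, string>(remoteCall);
        }
        else
        {
            // InProcess and Server: run the handler locally.
            registry.Register<ProductQuery, string>(q => Task.FromResult("local:" + q.ProductId));
        }
    }
}

public static class RegistrationDemo
{
    // Consuming code: the same regardless of the mode chosen at start-up.
    public static Task<string> LookUpAsync(ToyRegistry registry) =>
        registry.DispatchAsync<string>(new ProductQuery { ProductId = Guid.Empty });

    public static async Task<string[]> RunAsync()
    {
        Func<ProductQuery, Task<string>> fakeRemote = q => Task.FromResult("remote:" + q.ProductId);
        ToyRegistry inProcess = new ToyRegistry();
        ToyRegistry client = new ToyRegistry();
        ToyStoreRegistration.UseStore(inProcess, ToyApplicationMode.InProcess, fakeRemote);
        ToyStoreRegistration.UseStore(client, ToyApplicationMode.Client, fakeRemote);
        return new[] { await LookUpAsync(inProcess), await LookUpAsync(client) };
    }
}
```

The first result comes from the local handler and the second from the stand-in remote call, yet LookUpAsync never changed. That is the property the real registration gives us for AddToCartCommandHandler and ProductController.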

I have shifted some other code around inside the solution to support code sharing with the Azure Function but that is really the extent of the code change. We’ve changed no business logic or consuming application code – we’ve simply moved where the command runs and the calling semantics are seamless – and essentially split the store out as a micro-service running inside an Azure Function. As long as you build your sub-systems as isolated units as we have here this same approach can be used with queues and other forms of remote call.
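
As a rough illustration of the queue variant, the sketch below swaps the HTTP call for an in-memory queue. It is a toy under stated assumptions: ToyQueuedCommand, ToyQueueDispatcher and ToyQueueWorker are hypothetical names, a ConcurrentQueue stands in for a real message queue, and the command is fire-and-forget, so no result travels back to the sender.

```csharp
using System;
using System.Collections.Concurrent;
using System.Collections.Generic;
using System.Threading.Tasks;

// Hypothetical types: an in-memory queue stands in for a real message queue.
public sealed class ToyQueuedCommand
{
    public string Message { get; set; }
}

// Sender side: "dispatching" only places the command on the queue.
public sealed class ToyQueueDispatcher
{
    private readonly ConcurrentQueue<ToyQueuedCommand> _queue;

    public ToyQueueDispatcher(ConcurrentQueue<ToyQueuedCommand> queue) => _queue = queue;

    public Task DispatchAsync(ToyQueuedCommand command)
    {
        _queue.Enqueue(command);
        return Task.CompletedTask;
    }
}

// Receiver side: a worker pulls commands off the queue and runs the handler directly.
public sealed class ToyQueueWorker
{
    private readonly ConcurrentQueue<ToyQueuedCommand> _queue;
    private readonly Func<ToyQueuedCommand, Task> _handler;

    public ToyQueueWorker(ConcurrentQueue<ToyQueuedCommand> queue, Func<ToyQueuedCommand, Task> handler)
    {
        _queue = queue;
        _handler = handler;
    }

    // Handles everything currently waiting and reports how many commands ran.
    public async Task<int> DrainAsync()
    {
        int handled = 0;
        while (_queue.TryDequeue(out ToyQueuedCommand command))
        {
            await _handler(command);
            handled++;
        }
        return handled;
    }
}

public static class QueueDemo
{
    public static async Task<List<string>> RunAsync()
    {
        ConcurrentQueue<ToyQueuedCommand> queue = new ConcurrentQueue<ToyQueuedCommand>();
        List<string> handled = new List<string>();
        ToyQueueDispatcher dispatcher = new ToyQueueDispatcher(queue);
        ToyQueueWorker worker = new ToyQueueWorker(queue, c =>
        {
            handled.Add(c.Message);
            return Task.CompletedTask;
        });

        await dispatcher.DispatchAsync(new ToyQueuedCommand { Message = "first" });
        await dispatcher.DispatchAsync(new ToyQueuedCommand { Message = "second" });
        // Nothing has been handled yet; both commands are waiting on the queue.
        await worker.DrainAsync();
        return handled;
    }
}
```

As with the HTTP case, the sender only ever calls DispatchAsync. Where and when the handler runs is decided by configuration rather than by the business logic.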

I’ve found this approach to be massively powerful. In the early stages of a project you can make changes within a single codebase and an operational environment that is fairly simple and easy to manage, and as long as you have the tests to go with it, refactoring a solution like this is really simple and is supported by tools like ReSharper. Then, as you begin to lock things down or the solution grows, you can pull the sub-systems out into fully independent micro-services without significant code change. It’s largely just configuration, as we’ve seen above.

I wrote the commanding framework I’ve been using specifically to enable this approach and you can find it, and documentation, on GitHub here.

I hope this series has been interesting and presented (or refreshed) a different way of thinking about C# application architecture. There’s a fair chance I’ll swing back round and talk a bit about commanding result caching and some other scenarios that this approach enables so watch this space.

In the meantime if you have any questions about the approach or my commanding framework please do get in touch over on Twitter.

Finally the code for this final part can be found on GitHub here:

https://github.com/JamesRandall/CommandMessagePatternTutorial/tree/master/Part5
