In the last post we converted our traditional layered architecture into one organised around business domains, using the command and mediator patterns to promote a more loosely coupled approach. However, things were still very much a work in progress: our controllers were fairly scruffy and our command handlers were largely a copy and paste of the services from the layered system.

In this post I’m going to concentrate on simplifying the controllers and undertake some minor rework on our commands to help with this. This part is going to be a bit more code heavy than the last and will involve diving into parts of ASP.NET Core. The code resulting from all these changes can be found on GitHub here:

https://github.com/JamesRandall/CommandMessagePatternTutorial/tree/master/Part2

As a reminder, our aim is to move from controllers with actions that look like this:

    [HttpPut("{productId}/{quantity}")]
    public async Task<IActionResult> Put(Guid productId, int quantity)
    {
        CommandResponse response = await _dispatcher.DispatchAsync(new AddToCartCommand
        {
            UserId = this.GetUserId(),
            ProductId = productId,
            Quantity = quantity
        });
        if (response.IsSuccess)
        {
            return Ok();
        }
        return BadRequest(response.ErrorMessage);
    }

To controllers with actions that look like this:

    [HttpPut("{productId}/{quantity}")]
    public async Task<IActionResult> Put([FromRoute] AddToCartCommand command) => await ExecuteCommand(command);

And we want to do this in a way that makes adding future controllers and commands super simple and reliable.

We can do this fairly straightforwardly because we now only have a single “service”, our dispatcher, and our commands are simple state. This being the case, we can use ASP.NET Core’s model binding to take care of populating our commands, along with some conventions and a base class to do the heavy lifting for each of our controllers. This focuses the complexity in a single, testable place and means that our controllers all become simple and thin.

If we’re going to use model binding to construct our commands, the first thing we need to be wary of is security: many of our commands have a UserId property that, as can be seen in the code snippet above, is set within the action from a claim. Claims-based security and the options available in ASP.NET Core are a topic in their own right and not central to the application architecture and code structure we’re focusing on, so for the sake of simplicity I’m going to use a hard-coded GUID. Hopefully it’s clear from the code below where this ID would come from in a fully fledged solution:

    public static class ControllerExtensions
    {
        public static Guid GetUserId(this Controller controller)
        {
            // in reality this would pull the user ID from the claims e.g.
            // return Guid.Parse(controller.User.FindFirst("userId").Value);
            return Guid.Parse("A9F7EE3A-CB0D-4056-9DB5-AD1CB07D3093");
        }
    }
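For a real application, the commented-out line above could be hardened a little. The following is only a sketch: it assumes the same "userId" claim type mentioned in that comment (a real identity provider may well use a different name), and ClaimsPrincipalExtensions and TryGetUserId are hypothetical names that are not part of the sample project. It avoids throwing when the claim is missing or malformed by reporting failure instead.

```csharp
using System;
using System.Security.Claims;

public static class ClaimsPrincipalExtensions
{
    // Assumption: the claim type matches the "userId" used in the comment above.
    private const string UserIdClaimType = "userId";

    // Returns true and the parsed ID when the principal carries a well-formed
    // userId claim; otherwise returns false and leaves userId as Guid.Empty.
    public static bool TryGetUserId(this ClaimsPrincipal user, out Guid userId)
    {
        userId = Guid.Empty;
        var value = user?.FindFirst(UserIdClaimType)?.Value;
        return value != null && Guid.TryParse(value, out userId);
    }
}
```

A caller would then decide what a missing ID means for it, for example returning an unauthorized response rather than dispatching a command with an empty user ID.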

If we’re going to use model binding, we need to be extremely careful that this ID cannot be set by a consumer, as this would almost certainly lead to a security incident. In fact, if we make an interim change to our controller action we can see immediately that we’ve got a problem:

    [HttpPut("{productId}/{quantity}")]
    public async Task<IActionResult> Put([FromRoute]AddToCartCommand command)
    {
        CommandResponse response = await _dispatcher.DispatchAsync(command);
        if (response.IsSuccess)
        {
            return Ok();
        }
        return BadRequest(response.ErrorMessage);
    }

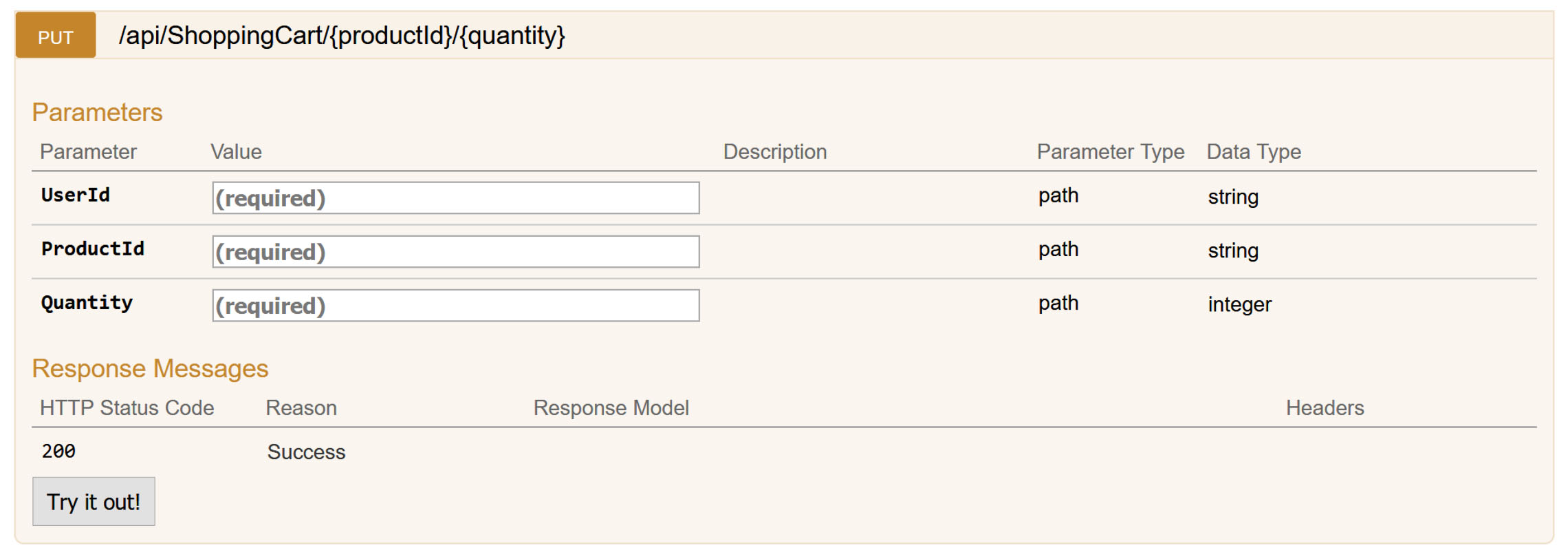

The user ID has bled through into the Swagger definition but, because we’ve configured the routing with no userId parameter on the route definition, the binder will ignore any value we try to supply: there is nowhere to specify it as a route parameter, and a query parameter or request body will be ignored. Still, it’s not pleasant: it’s misleading to the consumer of the API and if, for example, we were reading the command from the request body, the model binder would pick it up.

We’re going to take a three-pronged approach to this so that we can robustly prevent this happening in all scenarios. Firstly, to support some of these changes, we’re going to rename the UserId property on our commands to AuthenticatedUserId and introduce an interface called IUserContextCommand, shown below, that all our commands that require this information will implement:

    public interface IUserContextCommand
    {
        Guid AuthenticatedUserId { get; set; }
    }

With that change made our AddToCartCommand now looks like this:

    public class AddToCartCommand : ICommand<CommandResponse>, IUserContextCommand
    {
        public Guid AuthenticatedUserId { get; set; }
        public Guid ProductId { get; set; }
        public int Quantity { get; set; }
    }

I’m using the Swashbuckle.AspNetCore package to provide a Swagger definition and user interface, and fortunately it’s quite a configurable package that allows us to customise how it interprets actions (operations in its parlance) and schema through a filter system. We’re going to create and register both an operation filter and a schema filter that ensure any reference to AuthenticatedUserId, either inbound or outbound, is removed. The first code snippet below removes the property from any schema (request or response bodies) and the second removes it from any operation parameters. If you use models with the [FromRoute] attribute as we have done, then Web API will correctly bind only the parameters specified in the route, but Swashbuckle will still include all the properties of the model in its definition.

    public class SwaggerAuthenticatedUserIdFilter : ISchemaFilter
    {
        private const string AuthenticatedUserIdProperty = "authenticatedUserId";
        private static readonly Type UserContextCommandType = typeof(IUserContextCommand);

        public void Apply(Schema model, SchemaFilterContext context)
        {
            if (UserContextCommandType.IsAssignableFrom(context.SystemType))
            {
                if (model.Properties.ContainsKey(AuthenticatedUserIdProperty))
                {
                    model.Properties.Remove(AuthenticatedUserIdProperty);
                }
            }
        }
    }

    public class SwaggerAuthenticatedUserIdOperationFilter : IOperationFilter
    {
        private const string AuthenticatedUserIdProperty = "authenticatedUserId";

        public void Apply(Operation operation, OperationFilterContext context)
        {
            // Compare ordinally and ignore case: ToLower() is culture-sensitive and
            // can fail to match under some cultures (for example Turkish casing of "I").
            IParameter authenticatedUserIdParameter = operation.Parameters?.SingleOrDefault(
                x => string.Equals(x.Name, AuthenticatedUserIdProperty, StringComparison.OrdinalIgnoreCase));
            if (authenticatedUserIdParameter != null)
            {
                operation.Parameters.Remove(authenticatedUserIdParameter);
            }
        }
    }

These are registered in our Startup.cs file alongside Swagger itself:

    public void ConfigureServices(IServiceCollection services)
    {
        services.AddMvc();
        services.AddSwaggerGen(c =>
        {
            c.SwaggerDoc("v1", new Info { Title = "Online Store API", Version = "v1" });
            c.SchemaFilter<SwaggerAuthenticatedUserIdFilter>();
            c.OperationFilter<SwaggerAuthenticatedUserIdOperationFilter>();
        });

        CommandingDependencyResolver = new MicrosoftDependencyInjectionCommandingResolver(services);
        ICommandRegistry registry = CommandingDependencyResolver.UseCommanding();
        services
            .UseShoppingCart(registry)
            .UseStore(registry)
            .UseCheckout(registry);
    }

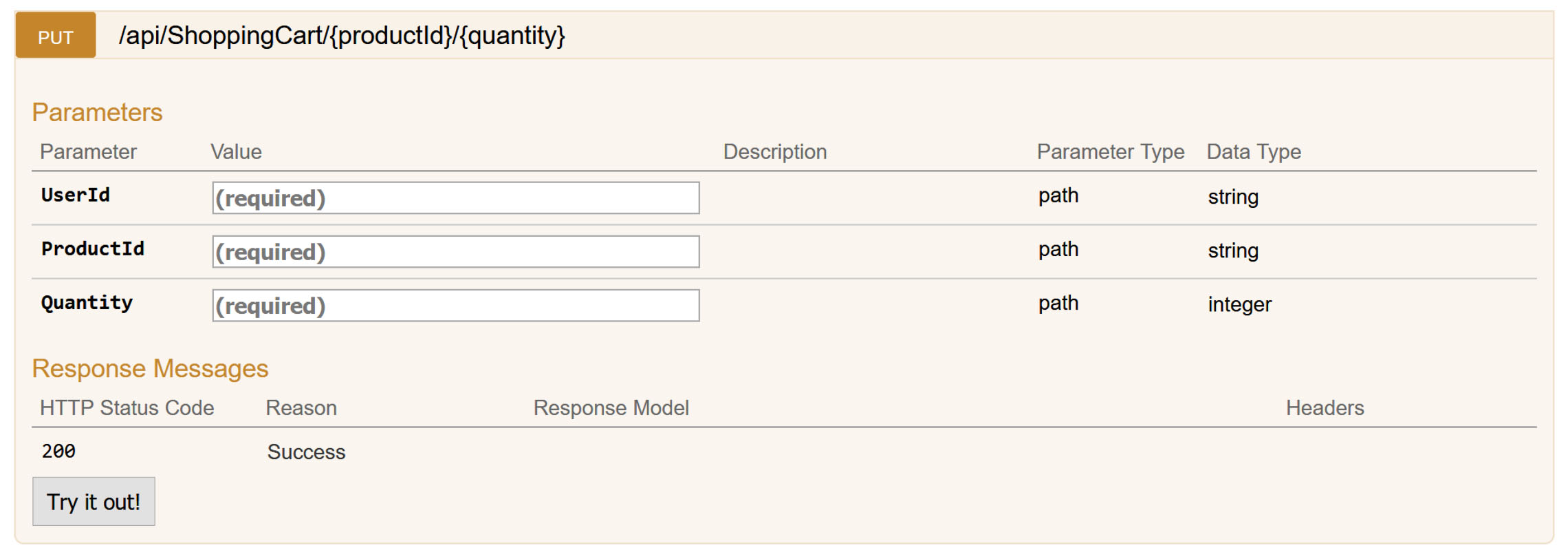

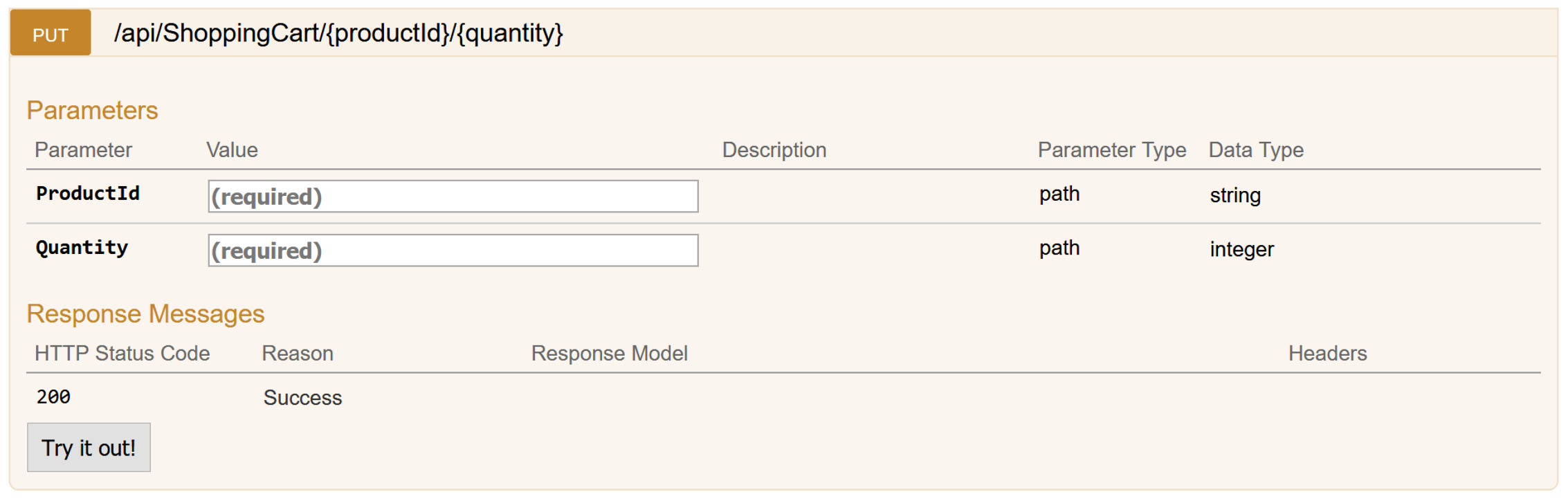

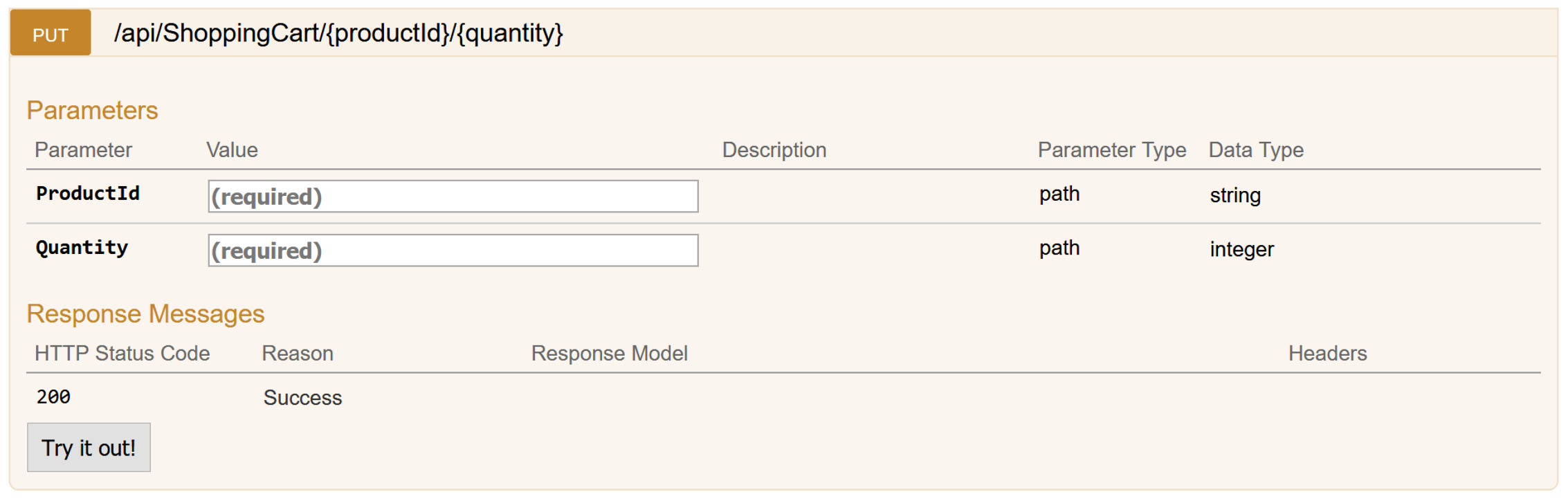

If we try this now we can see that our action now looks as we expect:

With our Swagger work we’ve at least obfuscated our AuthenticatedUserId property, and now we want to make sure ASP.NET ignores it during binding operations. A common means of doing this is to use the [BindNever] attribute on models. However, we want our commands to remain free of the concerns of the host environment, we don’t want to have to remember to stamp [BindNever] on all of our models, and we definitely don’t want the assemblies that contain them to need to reference ASP.NET packages. After all, one of the aims of taking this approach is to promote a very loosely coupled design.

This being the case, we need to find another way. Other than the binding attributes, possibly the easiest way to prevent user IDs being set from a request body is to decorate the default body binder with some additional functionality that forces an empty GUID. Binders consist of two parts, the binder itself and a binder provider, so to add our binder we need two new classes (and we also include a helper class with an extension method). Firstly our custom binder:

    public class AuthenticatedUserIdAwareBodyModelBinder : IModelBinder
    {
        private readonly IModelBinder _decoratedBinder;

        public AuthenticatedUserIdAwareBodyModelBinder(IModelBinder decoratedBinder)
        {
            _decoratedBinder = decoratedBinder;
        }

        public async Task BindModelAsync(ModelBindingContext bindingContext)
        {
            await _decoratedBinder.BindModelAsync(bindingContext);
            if (bindingContext.Result.Model is IUserContextCommand command)
            {
                command.AuthenticatedUserId = Guid.Empty;
            }
        }
    }

You can see from the code above that we delegate all the actual binding down to the decorated binder, then simply check whether we are dealing with a command that implements our IUserContextCommand interface and blank out the GUID if necessary.

We then need a corresponding provider to supply this model binder:

    internal static class AuthenticatedUserIdAwareBodyModelBinderProviderInstaller
    {
        public static void AddAuthenticatedUserIdAwareBodyModelBinderProvider(this MvcOptions options)
        {
            IModelBinderProvider bodyModelBinderProvider = options.ModelBinderProviders.Single(x => x is BodyModelBinderProvider);
            int index = options.ModelBinderProviders.IndexOf(bodyModelBinderProvider);
            options.ModelBinderProviders.Remove(bodyModelBinderProvider);
            options.ModelBinderProviders.Insert(index, new AuthenticatedUserIdAwareBodyModelBinderProvider(bodyModelBinderProvider));
        }
    }

    internal class AuthenticatedUserIdAwareBodyModelBinderProvider : IModelBinderProvider
    {
        private readonly IModelBinderProvider _decoratedProvider;

        public AuthenticatedUserIdAwareBodyModelBinderProvider(IModelBinderProvider decoratedProvider)
        {
            _decoratedProvider = decoratedProvider;
        }

        public IModelBinder GetBinder(ModelBinderProviderContext context)
        {
            // Ask the decorated provider once and wrap whatever it returns.
            IModelBinder modelBinder = _decoratedProvider.GetBinder(context);
            return modelBinder == null ? null : new AuthenticatedUserIdAwareBodyModelBinder(modelBinder);
        }
    }

Our installation extension method looks for the default BodyModelBinderProvider class, extracts it, constructs our decorator around it, and replaces it in the set of binders.

Again, like with our Swagger filters, we configure this in the ConfigureServices method of our Startup class:

    // This method gets called by the runtime. Use this method to add services to the container.
    public void ConfigureServices(IServiceCollection services)
    {
        services.AddMvc(c =>
        {
            c.AddAuthenticatedUserIdAwareBodyModelBinderProvider();
        });
        services.AddSwaggerGen(c =>
        {
            c.SwaggerDoc("v1", new Info { Title = "Online Store API", Version = "v1" });
            c.SchemaFilter<SwaggerAuthenticatedUserIdFilter>();
            c.OperationFilter<SwaggerAuthenticatedUserIdOperationFilter>();
        });

        CommandingDependencyResolver = new MicrosoftDependencyInjectionCommandingResolver(services);
        ICommandRegistry registry = CommandingDependencyResolver.UseCommanding();
        services
            .UseShoppingCart(registry)
            .UseStore(registry)
            .UseCheckout(registry);
    }

At this point we’ve configured ASP.NET so that consumers of the API cannot manipulate sensitive properties on our commands, so for our next step we want to ensure that the AuthenticatedUserId property on our commands is populated with the legitimate ID of the logged-in user.

While we could set this in our model binder (where currently we ensure it is blank), I’d suggest that’s a mixing of concerns, and in any case it will only be triggered on an action with a request body: it won’t work for a command bound from a route or query parameters, for example. All that being the case, I’m going to implement this through a simple action filter as below:

    public class AssignAuthenticatedUserIdActionFilter : IActionFilter
    {
        public void OnActionExecuting(ActionExecutingContext context)
        {
            foreach (object parameter in context.ActionArguments.Values)
            {
                if (parameter is IUserContextCommand userContextCommand)
                {
                    userContextCommand.AuthenticatedUserId = ((Controller) context.Controller).GetUserId();
                }
            }
        }

        public void OnActionExecuted(ActionExecutedContext context)
        {
        }
    }

Action filters run after authentication, authorization and model binding have occurred, so at this point we can be sure we can access the claims for a validated user.
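The filter only takes effect once MVC knows about it. One way to apply it to every action is to add it to the global filter collection when configuring MVC. The fragment below is a sketch of that idea rather than a copy of the sample project's Startup class: MvcRegistrationSketch and AddMvcWithUserContext are hypothetical names, and the fragment assumes it lives in the same assembly as the filter and the binder installer shown earlier.

```csharp
using Microsoft.AspNetCore.Mvc;
using Microsoft.Extensions.DependencyInjection;

public static class MvcRegistrationSketch
{
    // Hypothetical helper: in the sample these calls would sit directly
    // inside the services.AddMvc(...) call in ConfigureServices.
    public static IMvcBuilder AddMvcWithUserContext(this IServiceCollection services)
    {
        return services.AddMvc(options =>
        {
            // Blank out AuthenticatedUserId on anything bound from a request body...
            options.AddAuthenticatedUserIdAwareBodyModelBinderProvider();
            // ...then assign the real ID just before each action runs.
            options.Filters.Add(new AssignAuthenticatedUserIdActionFilter());
        });
    }
}
```

Registering the filter globally keeps with the convention-based aim of this post: new controllers pick up the behaviour without needing an attribute on each one.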

Before we move on it’s worth reminding ourselves what our updated controller action now looks like:

    [HttpPut("{productId}/{quantity}")]
    public async Task<IActionResult> Put([FromRoute]AddToCartCommand command)
    {
        CommandResponse response = await _dispatcher.DispatchAsync(command);
        if (response.IsSuccess)
        {
            return Ok();
        }
        return BadRequest(response.ErrorMessage);
    }

To remove this final bit of boilerplate we’re going to introduce a base class that handles the dispatch and HTTP response interpretation:

    public abstract class AbstractCommandController : Controller
    {
        protected AbstractCommandController(ICommandDispatcher dispatcher)
        {
            Dispatcher = dispatcher;
        }

        protected ICommandDispatcher Dispatcher { get; }

        protected async Task<IActionResult> ExecuteCommand(ICommand<CommandResponse> command)
        {
            CommandResponse response = await Dispatcher.DispatchAsync(command);
            if (response.IsSuccess)
            {
                return Ok();
            }
            return BadRequest(response.ErrorMessage);
        }
    }

With that in place we can finally get our controller to our target form:

    [Route("api/[controller]")]
    public class ShoppingCartController : AbstractCommandController
    {
        public ShoppingCartController(ICommandDispatcher dispatcher) : base(dispatcher)
        {
        }

        [HttpPut("{productId}/{quantity}")]
        public async Task<IActionResult> Put([FromRoute] AddToCartCommand command) => await ExecuteCommand(command);

        // ... other verbs
    }

To roll this out easily over the rest of our commands we’ll need to get them into a more consistent shape. At the moment they have a mix of response types, which isn’t ideal for translating the results into HTTP responses. We’ll unify them so they all return a CommandResponse object that can optionally also contain a result object:

    public class CommandResponse
    {
        protected CommandResponse()
        {
        }

        public bool IsSuccess { get; set; }

        public string ErrorMessage { get; set; }

        public static CommandResponse Ok() { return new CommandResponse { IsSuccess = true }; }

        public static CommandResponse WithError(string error) { return new CommandResponse { IsSuccess = false, ErrorMessage = error }; }
    }

    public class CommandResponse<T> : CommandResponse
    {
        public T Result { get; set; }

        public static CommandResponse<T> Ok(T result) { return new CommandResponse<T> { IsSuccess = true, Result = result }; }

        public new static CommandResponse<T> WithError(string error) { return new CommandResponse<T> { IsSuccess = false, ErrorMessage = error }; }

        public static implicit operator T(CommandResponse<T> from)
        {
            return from.Result;
        }
    }
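The implicit conversion on CommandResponse<T> is convenient but has a sharp edge: converting a failed response quietly yields default(T). The sketch below repeats compact copies of the two classes so that it compiles on its own, then adds a small guard. CommandResponseExample and ResultOrThrow are hypothetical names used purely for illustration and are not part of the sample project.

```csharp
using System;

// Compact copies of the response types above so the sketch is self-contained.
public class CommandResponse
{
    protected CommandResponse() { }
    public bool IsSuccess { get; set; }
    public string ErrorMessage { get; set; }
    public static CommandResponse Ok() => new CommandResponse { IsSuccess = true };
    public static CommandResponse WithError(string error) =>
        new CommandResponse { IsSuccess = false, ErrorMessage = error };
}

public class CommandResponse<T> : CommandResponse
{
    public T Result { get; set; }
    public static CommandResponse<T> Ok(T result) =>
        new CommandResponse<T> { IsSuccess = true, Result = result };
    public new static CommandResponse<T> WithError(string error) =>
        new CommandResponse<T> { IsSuccess = false, ErrorMessage = error };
    public static implicit operator T(CommandResponse<T> from) => from.Result;
}

public static class CommandResponseExample
{
    // Implicit conversion: a successful response reads like a plain value.
    public static int QuantityFrom(CommandResponse<int> response)
    {
        int quantity = response;
        return quantity;
    }

    // Hypothetical guard: surface the error instead of silently returning
    // default(T) when the response represents a failure.
    public static T ResultOrThrow<T>(CommandResponse<T> response)
    {
        if (!response.IsSuccess)
        {
            throw new InvalidOperationException(response.ErrorMessage);
        }
        return response.Result;
    }
}
```

With these definitions, QuantityFrom(CommandResponse<int>.Ok(3)) returns 3, whereas QuantityFrom(CommandResponse<int>.WithError("Cart not found")) returns 0 with no indication of the failure, so it is worth checking IsSuccess before relying on the conversion.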

With all that done we can complete our AbstractCommandController class and convert all of our controllers to the simpler syntax. By making these changes we’ve removed all the repetitive boilerplate code from our controllers, which means fewer tests to write and maintain, less scope for us to make mistakes and less scope for us to introduce inconsistencies. Instead we’ve leveraged the battle-hardened model binding capabilities of ASP.NET and concentrated all of our response handling in a single class that we can test extensively. And if we want to add new capabilities, all we need to do is add commands, handlers and controllers that follow our convention and all the wiring is taken care of for us.

In the next part we’ll explore how we can add validation to our commands in a way that is loosely coupled and reusable in domains other than ASP.NET, and then we’ll revisit our handlers to separate out our infrastructure code (logging for example) from our business logic.
