
Since I published this piece, Microsoft have made significant improvements to HTTP scaling on Azure Functions and the results below are out of date. Please see this post for a revised comparison.

I had a lot of interesting conversations and feedback following my recent post on scaling a serverless .NET application with Azure Functions and AWS Lambda. Two points came up repeatedly: a request to also include Google Cloud Functions, and a comment that the runtimes were not the same (.NET Core on AWS Lambda, .NET 4.6 on Azure Functions). On the latter point I certainly agree this is not ideal, but I continue to contend that these are your options for .NET, each is fully supported and described by its vendor as a scalable serverless runtime, and so it’s worth understanding and comparing them, because that is the choice you face as a .NET developer. I’m also fairly sure that although the different runtimes might make a difference to outright raw response time (and therefore to throughput and the ultimate amount of resource required), the scaling issues with Azure had less to do with the runtime and more to do with the surrounding serverless implementation.

Do I think a .NET Core function in a well-architected serverless host will outperform a .NET Framework based function in a well-architected serverless host? Yes. Do I think .NET Framework is the root cause of the scaling issues on Azure? No. In my view AWS Lambda currently manages HTTP-triggered functions better than Azure, which is hampered by a model based around App Service plans.

Taking all that on board, and wanting to better support or refute my belief that the scaling issues are related more to the host than to the framework, I’ve rewritten the test subject as a tiny Node / JavaScript application and retested the platforms on this runtime. Node is supported by all three platforms, and all three are currently running Node.js 6.x.

My primary test continues to be a mixed light workload of CPU and IO (load three blobs from the vendor’s storage offering, then compile and run a handlebars template), the kind of workload it’s fairly typical to find in an HTTP function / public facing API. However, I’ve also run some tests against “stock” functions: the vendor samples that simply return strings. Finally, I’ve included some percentile-based data obtained using ApacheBench, and I’ve covered cold start scenarios.
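To make the shape of that workload concrete, here is a minimal sketch of an IO-then-CPU request handler. It is not the code from the repository: the storage client and the template engine are injected as hypothetical `loadBlob` and `render` functions so the flow can be shown without any vendor SDK, the blob names are invented for illustration, and loading the three blobs concurrently is an assumption (the real function may load them one after another).

```typescript
// Hypothetical dependency shapes: the real functions use each vendor's storage
// SDK and the handlebars package; both are injected here for illustration.
type LoadBlob = (name: string) => Promise<string>;
type RenderTemplate = (template: string, data: unknown) => string;

interface WorkloadDeps {
  loadBlob: LoadBlob;     // IO: fetch one blob from the vendor's storage service
  render: RenderTemplate; // CPU: compile and run a template
}

// Assumed blob names, for illustration only.
const BLOB_NAMES = ["template.hbs", "first.txt", "second.txt"];

async function handleRequest(deps: WorkloadDeps): Promise<string> {
  // IO phase: request all three blobs concurrently (an assumption).
  const [template, first, second] = await Promise.all(
    BLOB_NAMES.map((name) => deps.loadBlob(name)),
  );
  // CPU phase: render the template using the other two blobs as data.
  return deps.render(template, { first, second });
}
```

With the `handlebars` npm package, `render` could be supplied as `(t, d) => Handlebars.compile(t)(d)`; keeping the dependencies injectable also makes it easy to time the IO and CPU phases separately.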

I’ve also managed to normalise the axes this time round for a clearer comparison and the code and data can all be found on GitHub:

https://github.com/JamesRandall/serverlessJsScalingComparison

(In the last week AWS have also added full support for .NET Core 2.0 on Lambda – expect some data on that soon)

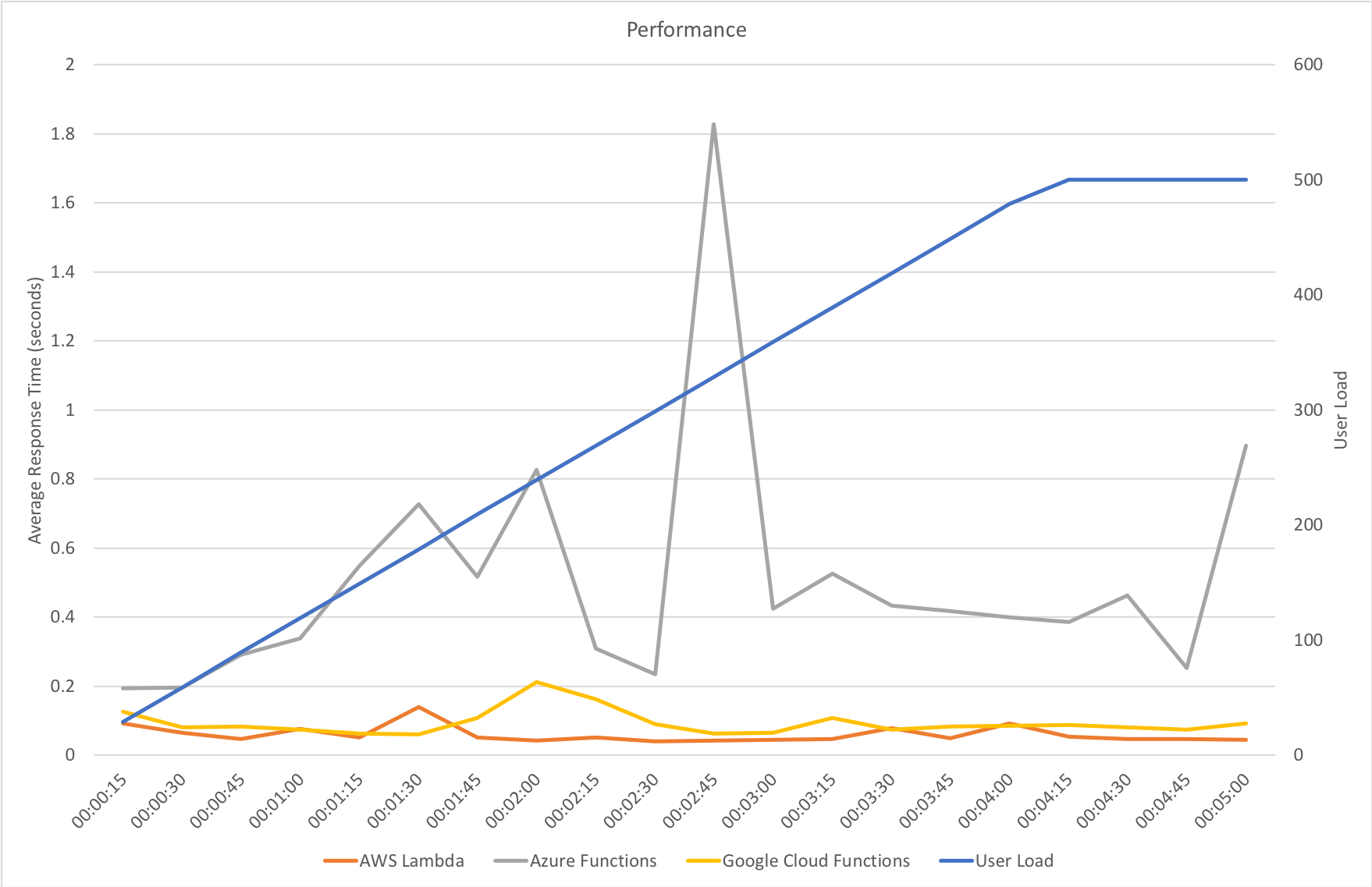

Gradual Ramp Up

This test case starts with 1 user and adds 2 users per second up to a maximum of 500 concurrent users to demonstrate a slow and steady increase in load.
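The ramp can be modelled as a step function to see when each test reaches its ceiling. This is a sketch that assumes users are added in discrete steps at fixed intervals, which may not match exactly how the load testing tool schedules them; `RampProfile`, `usersAt` and `secondsToPeak` are hypothetical names. It covers both this profile and the rapid ramp-up profile described later (10 users every 2 seconds up to 1000).

```typescript
// Parameters of a linear load ramp (assumed to step discretely).
interface RampProfile {
  initialUsers: number;    // users at t = 0
  stepUsers: number;       // users added per step
  stepIntervalSec: number; // seconds between steps
  maxUsers: number;        // ceiling on concurrent users
}

// Target number of concurrent users at elapsed time tSec.
function usersAt(p: RampProfile, tSec: number): number {
  const steps = Math.floor(tSec / p.stepIntervalSec);
  return Math.min(p.maxUsers, p.initialUsers + steps * p.stepUsers);
}

// Seconds until the ramp first reaches its ceiling.
function secondsToPeak(p: RampProfile): number {
  const stepsNeeded = Math.ceil((p.maxUsers - p.initialUsers) / p.stepUsers);
  return stepsNeeded * p.stepIntervalSec;
}

const gradual: RampProfile = { initialUsers: 1, stepUsers: 2, stepIntervalSec: 1, maxUsers: 500 };
const rapid: RampProfile = { initialUsers: 10, stepUsers: 10, stepIntervalSec: 2, maxUsers: 1000 };
```

Under this model `secondsToPeak(gradual)` is 250 seconds and `secondsToPeak(rapid)` is 198 seconds, so both tests spend a little over three to four minutes in their growth phase before holding at peak load.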

The AWS and Azure results for JavaScript are very similar to those seen for .NET, with Azure again struggling with response times and never really competing with AWS when under load. Both AWS and Azure exhibit faster response times when using JavaScript than .NET.

Google Cloud Functions run fairly close to AWS Lambda but can’t quite match it for response time, and fall behind on overall throughput, where they sit closer to Azure’s results. Given the difference in response time, this suggests Azure is processing more concurrent requests than Google, allowing it to reach a similar throughput after the dip Azure encounters at around the 2:30 mark; presumably Azure allocates more resources at that point. That dip deserves further attention and is something I will come back to in a future post.
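The inference about concurrency follows from Little’s law: the average number of requests in flight equals throughput multiplied by mean response time. For a closed-loop load test, where each virtual user waits for a response (plus any think time) before sending the next request, the related interactive response time law gives throughput as users divided by response time plus think time. The sketch below encodes both; the function names are hypothetical and the numbers in the note are illustrative, not read from the charts.

```typescript
// Little's law: average requests in flight L = throughput (lambda) x mean response time (W).
function requestsInFlight(throughputPerSec: number, meanResponseSec: number): number {
  return throughputPerSec * meanResponseSec;
}

// Interactive response time law for a closed-loop test: N users, each waiting
// for a response plus an optional think time Z before sending again.
function closedLoopThroughput(users: number, meanResponseSec: number, thinkTimeSec = 0): number {
  return users / (meanResponseSec + thinkTimeSec);
}
```

For example, two platforms both serving 400 requests per second with mean response times of 0.25 s and 0.5 s must be holding 100 and 200 requests in flight respectively, so the slower one is doing more concurrent work to reach the same throughput. Conversely, with 400 users and no think time, halving response time from 0.5 s to 0.25 s doubles throughput from 800 to 1600 requests per second.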

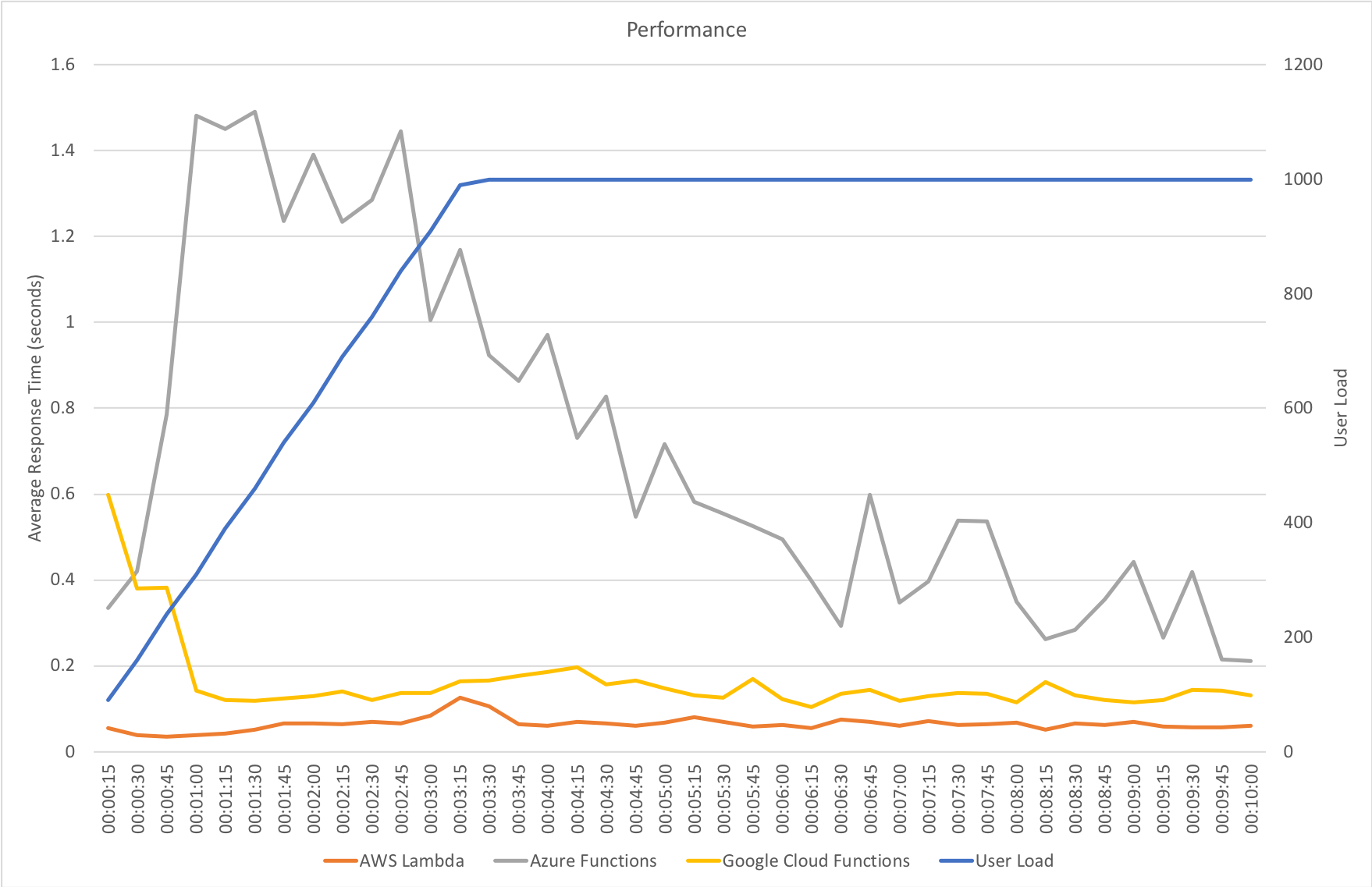

Rapid Ramp Up

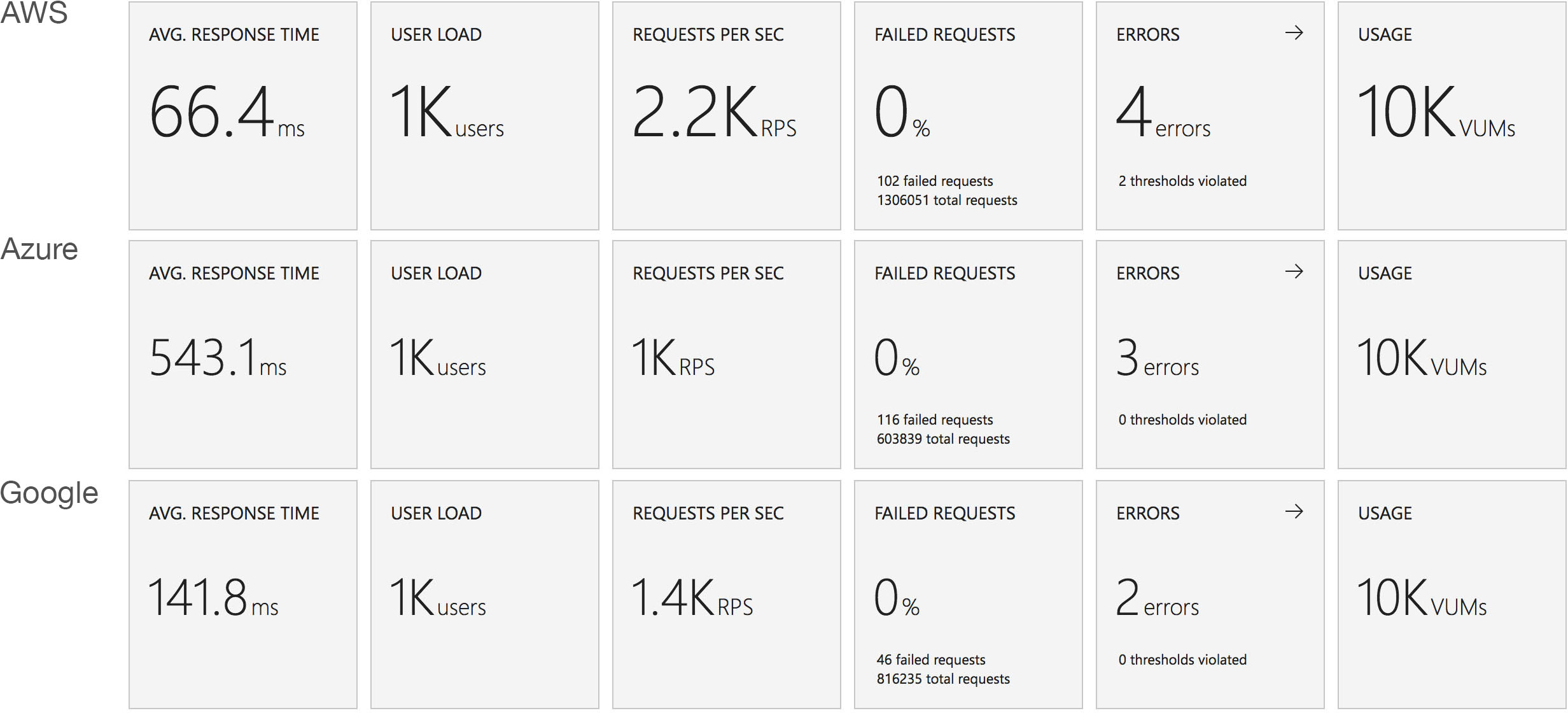

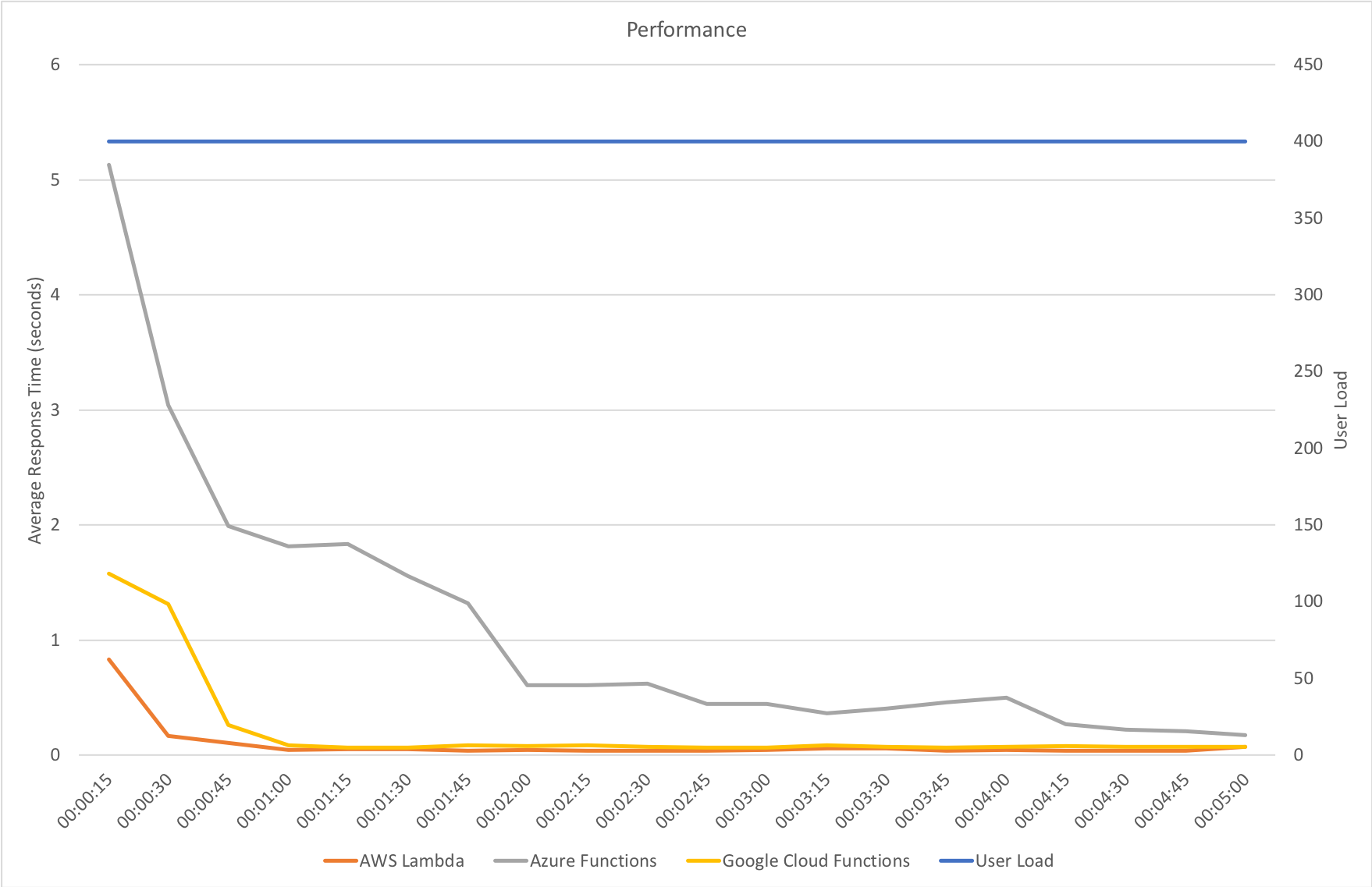

This test case starts with 10 users and adds 10 users every 2 seconds up to a maximum of 1000 concurrent users to demonstrate a more rapid increase in load and a higher peak concurrency.

Again AWS handles the increase in load very smoothly, maintaining a low response time throughout, and is the clear leader.

Azure struggles to keep up with this rate of request increase. Response times hover around the 1.5 second mark throughout the growth stage and gradually decrease towards something acceptable over the next 3 minutes. Throughput continues to climb over the full duration of the test run, matching and perhaps slightly exceeding Google by the end, but still some way behind Amazon.

Google has two quite distinctive, sharp drops in response time early in the growth stage as the load increases, before quickly stabilising at a response time of around 140ms; its throughput levels off in line with demand at the end of the growth phase.

I didn’t run this scenario with .NET, instead hitting the systems with an immediate 1000 users, but the results are nevertheless in line with that test, particularly once the growth phase is over.

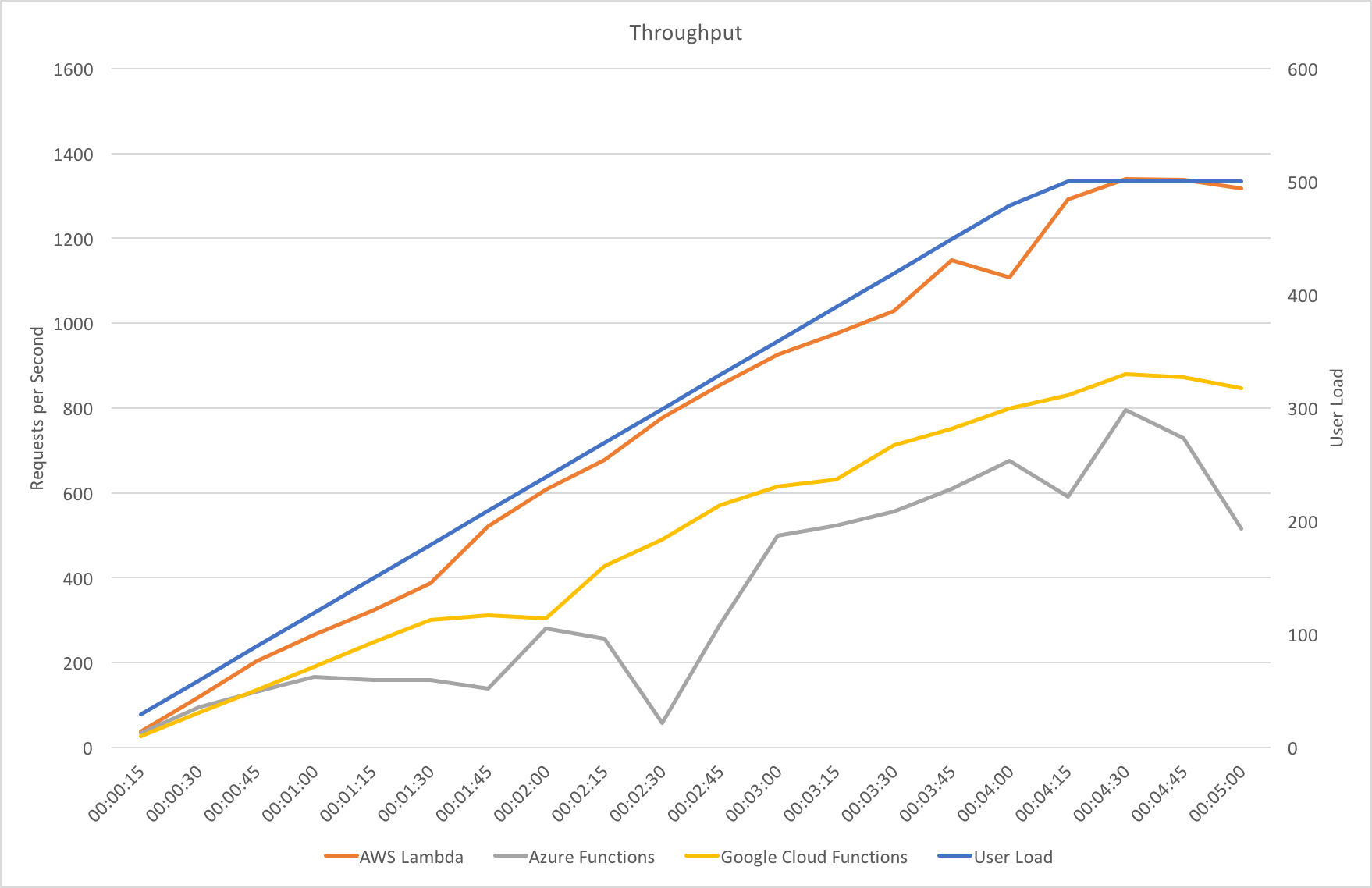

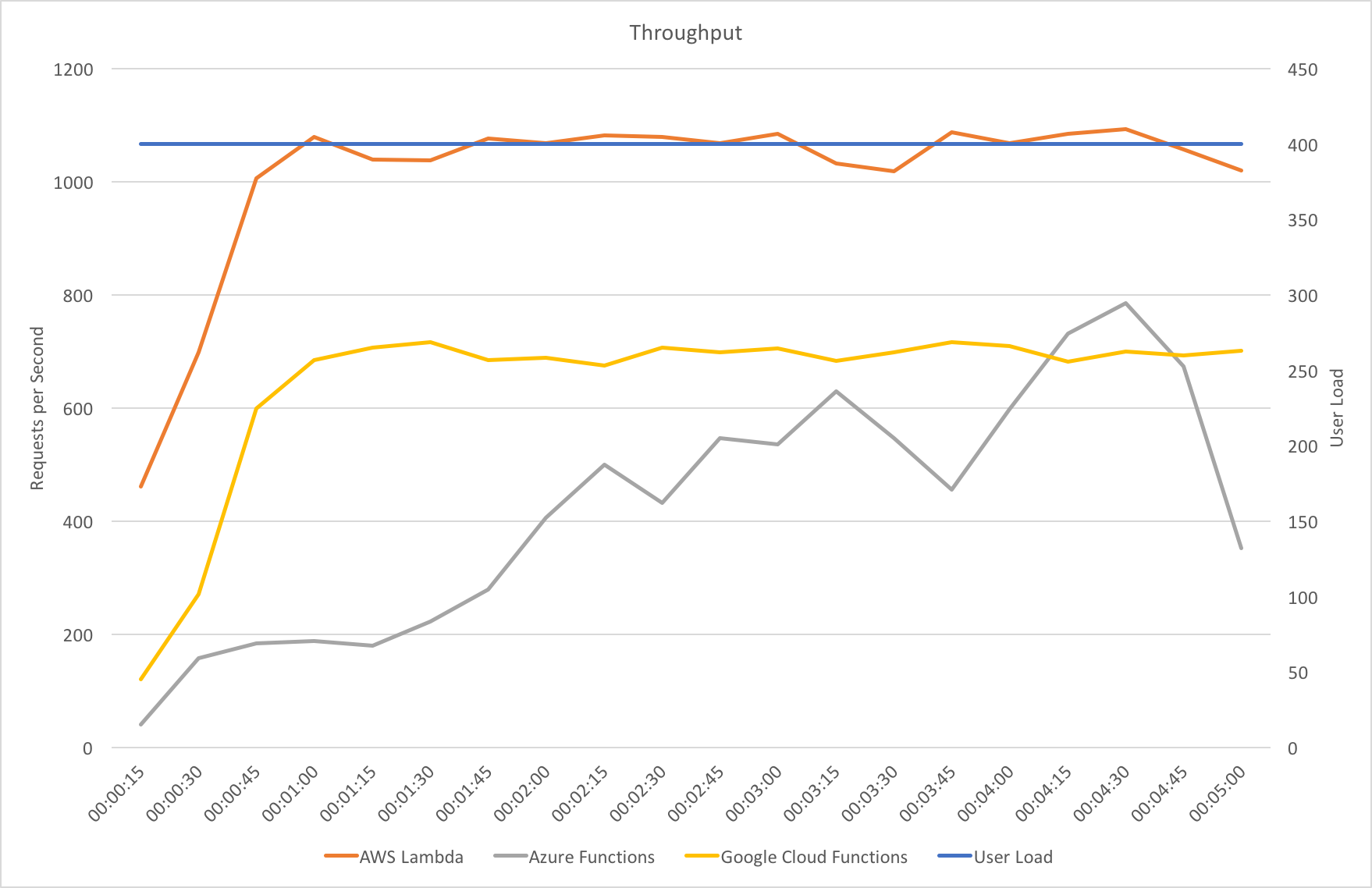

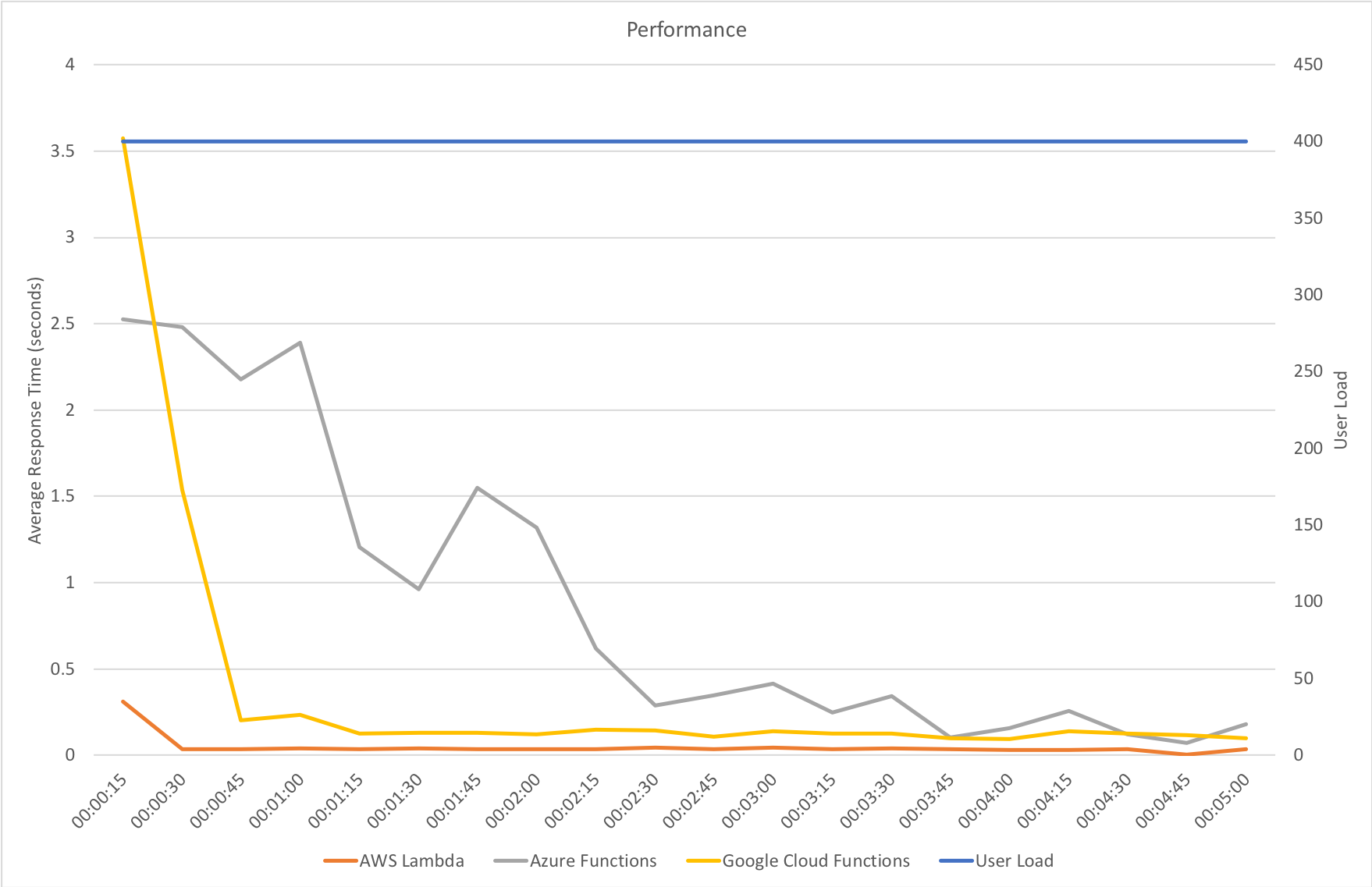

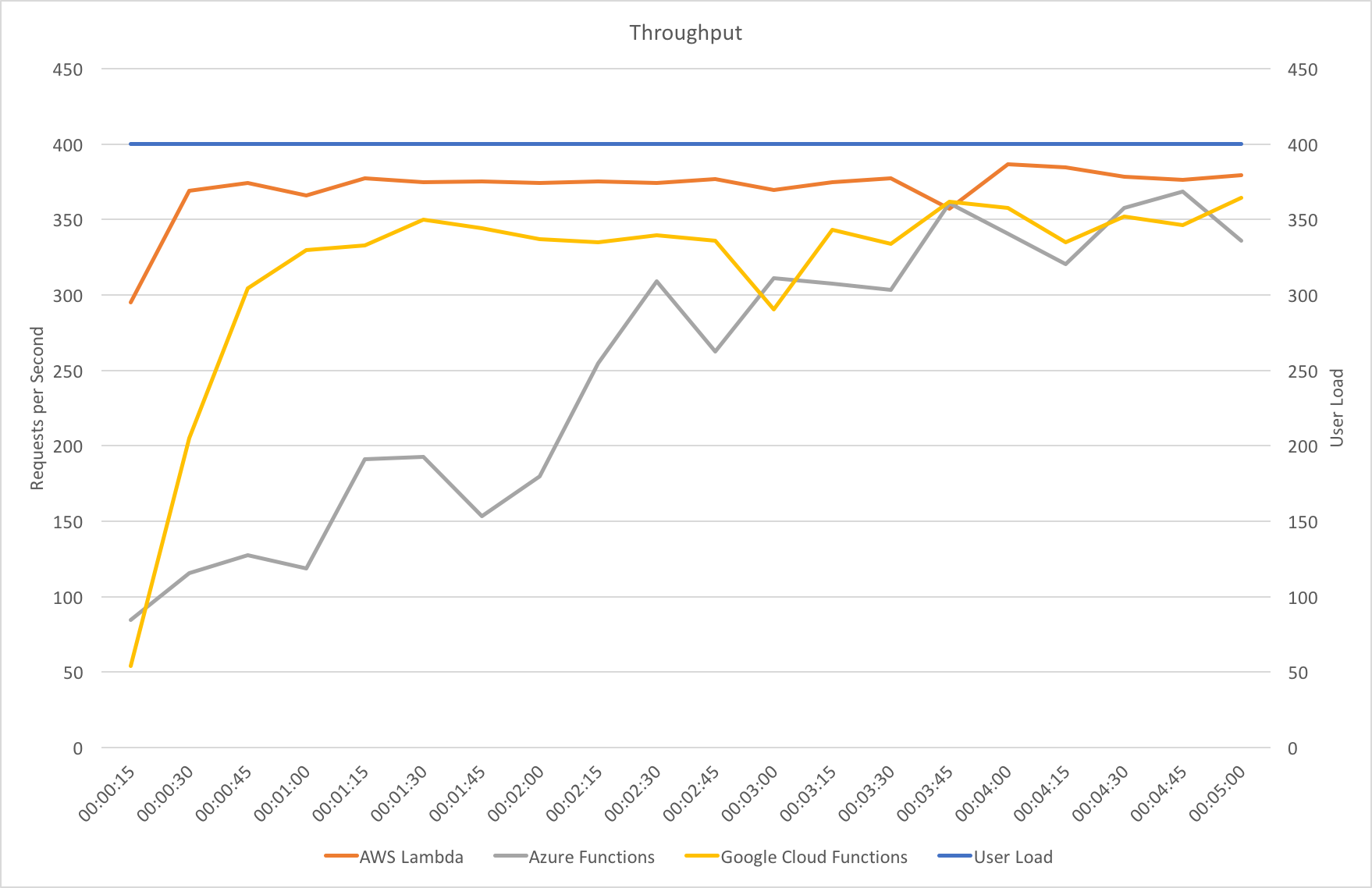

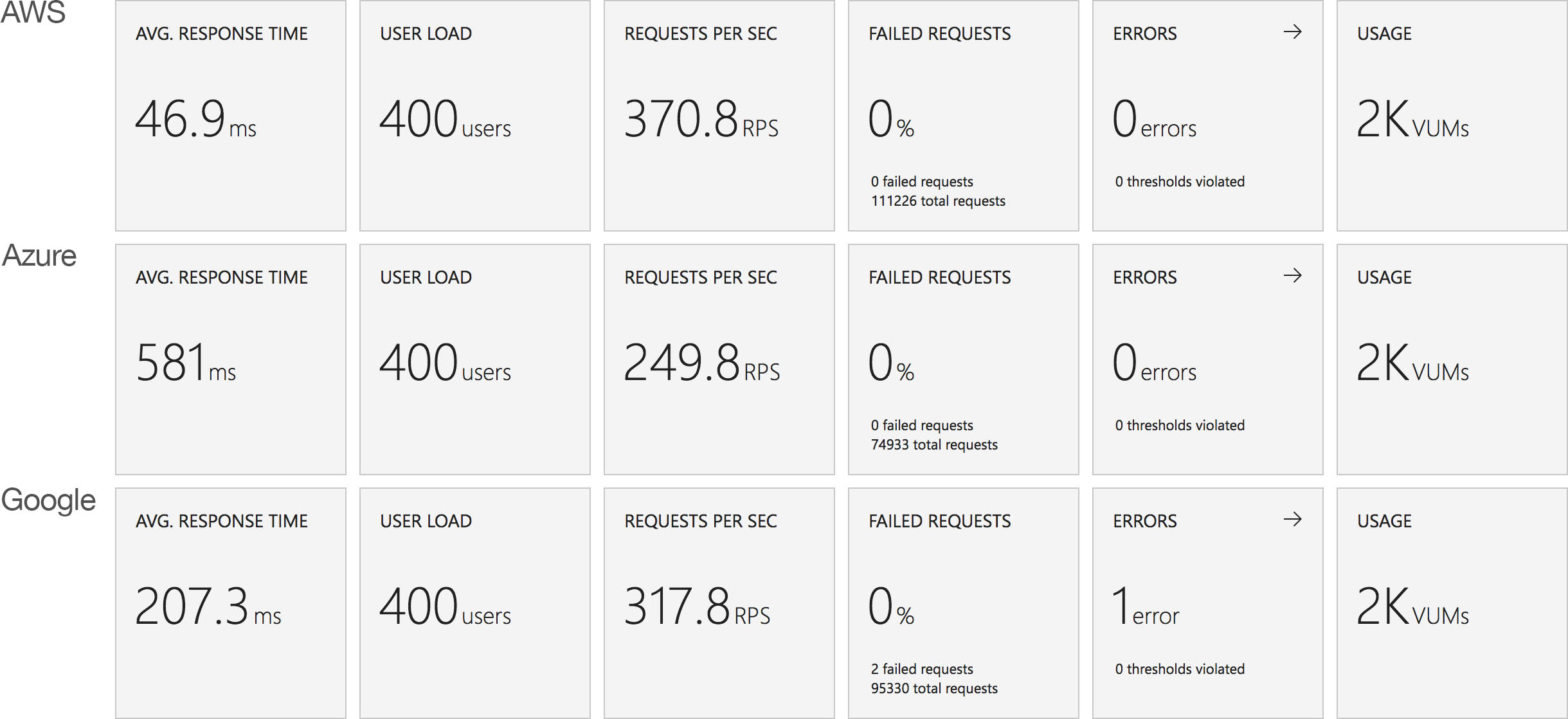

Immediate High Demand

This test case starts immediately with 400 concurrent users and stays at that level of load for 5 minutes, demonstrating the response to a sudden spike in demand.

Both AWS and Google scale quickly to deal with the sudden demand, each reaching a steady, low response time at around the 1 minute mark, but AWS is the clear leader in throughput: its lower response time lets it get through many more requests per second than Google.

Azure again brings up the rear: it takes nearly 2 minutes to reach a steady response time, and that response time is markedly higher than both Google’s and AWS’s. Throughput continues to increase towards the end of the test, eventually peaking slightly ahead of Google but still some way behind AWS, and then falls off in a way that is difficult to explain from the data available.

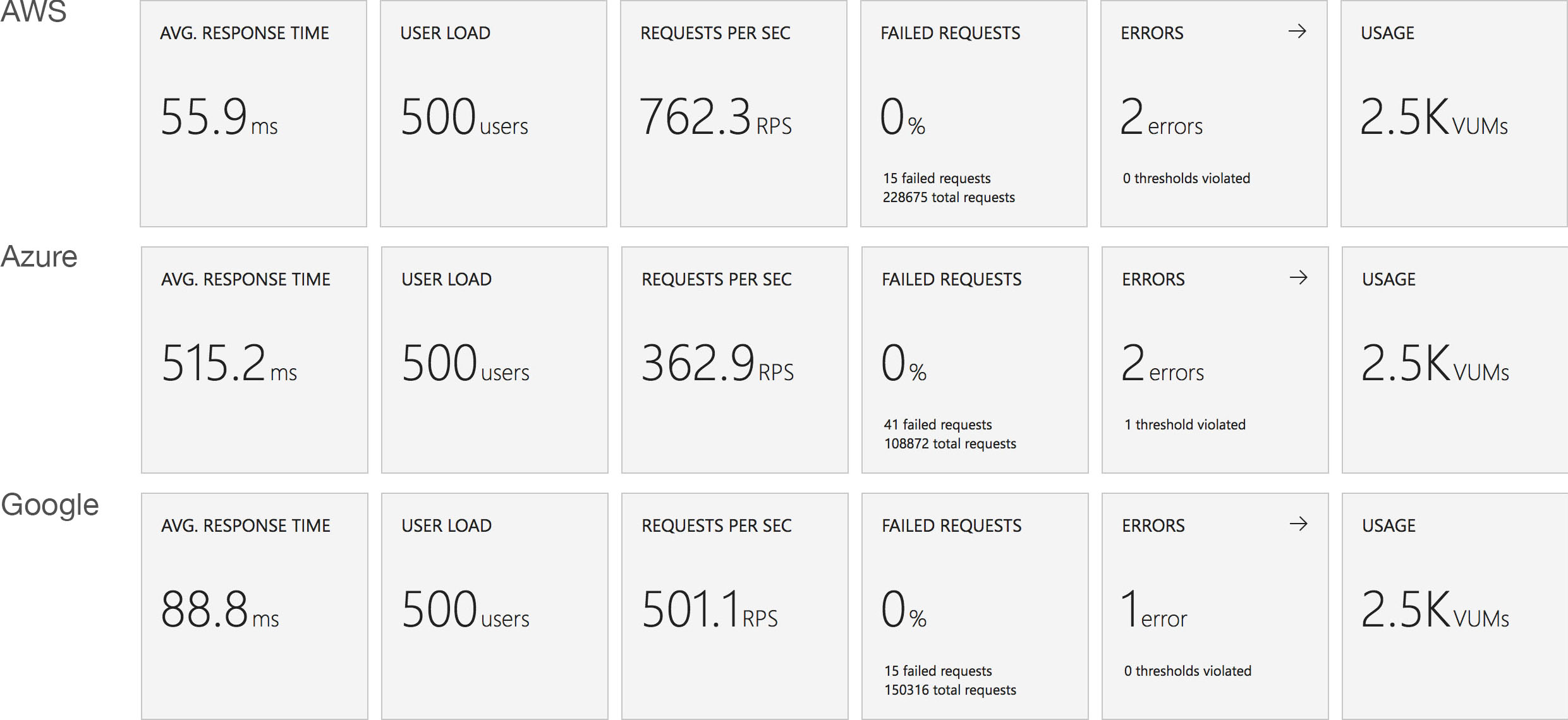

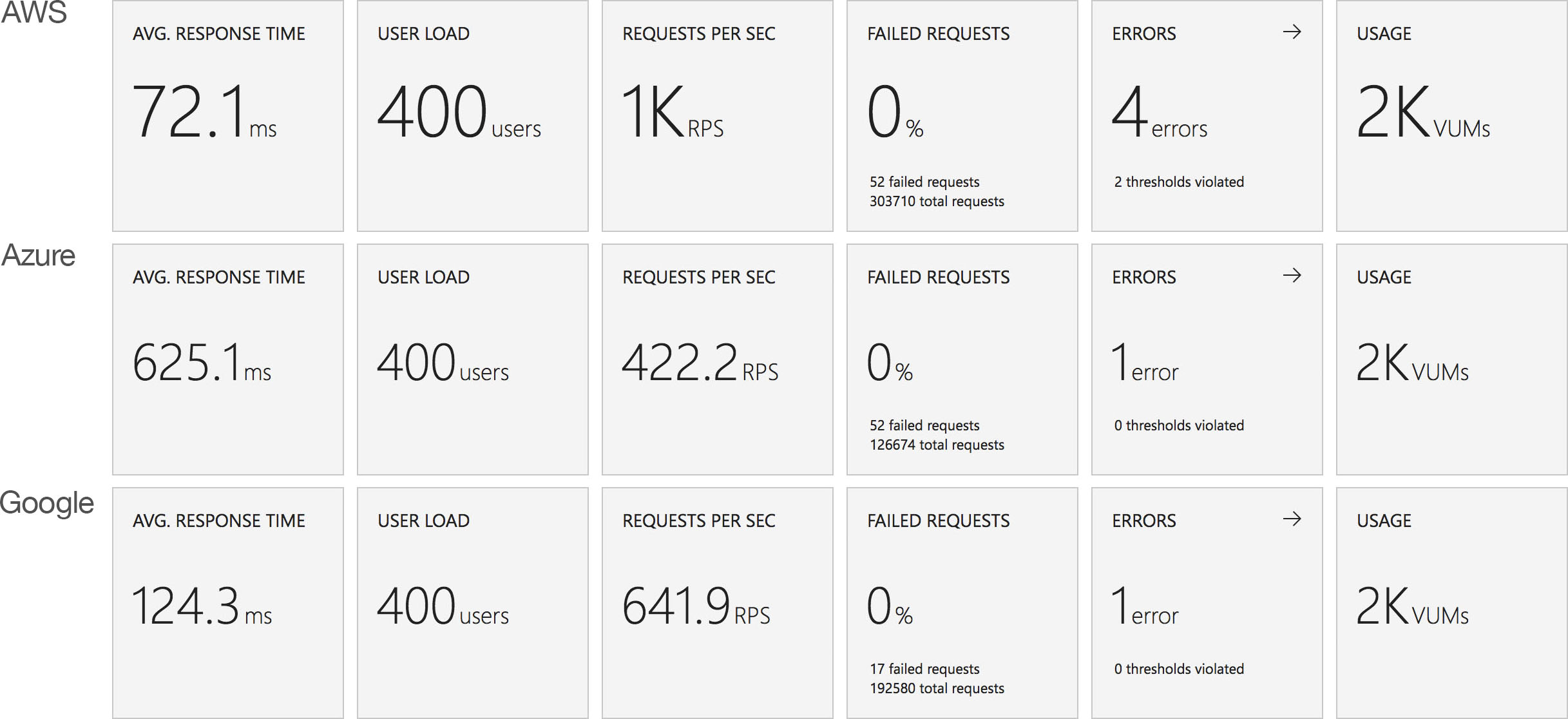

Stock Functions

This test uses the stock “return a string” function provided by each platform (I’ve captured the code in GitHub for reference) with the immediate high demand scenario: 400 concurrent users for 5 minutes.

With the functions doing essentially no work and no IO, the response times are, as you would expect, lower across the board, but the scaling patterns are largely unchanged from the workload function under the same load. AWS and Google respond quickly while Azure ramps up more slowly over time.

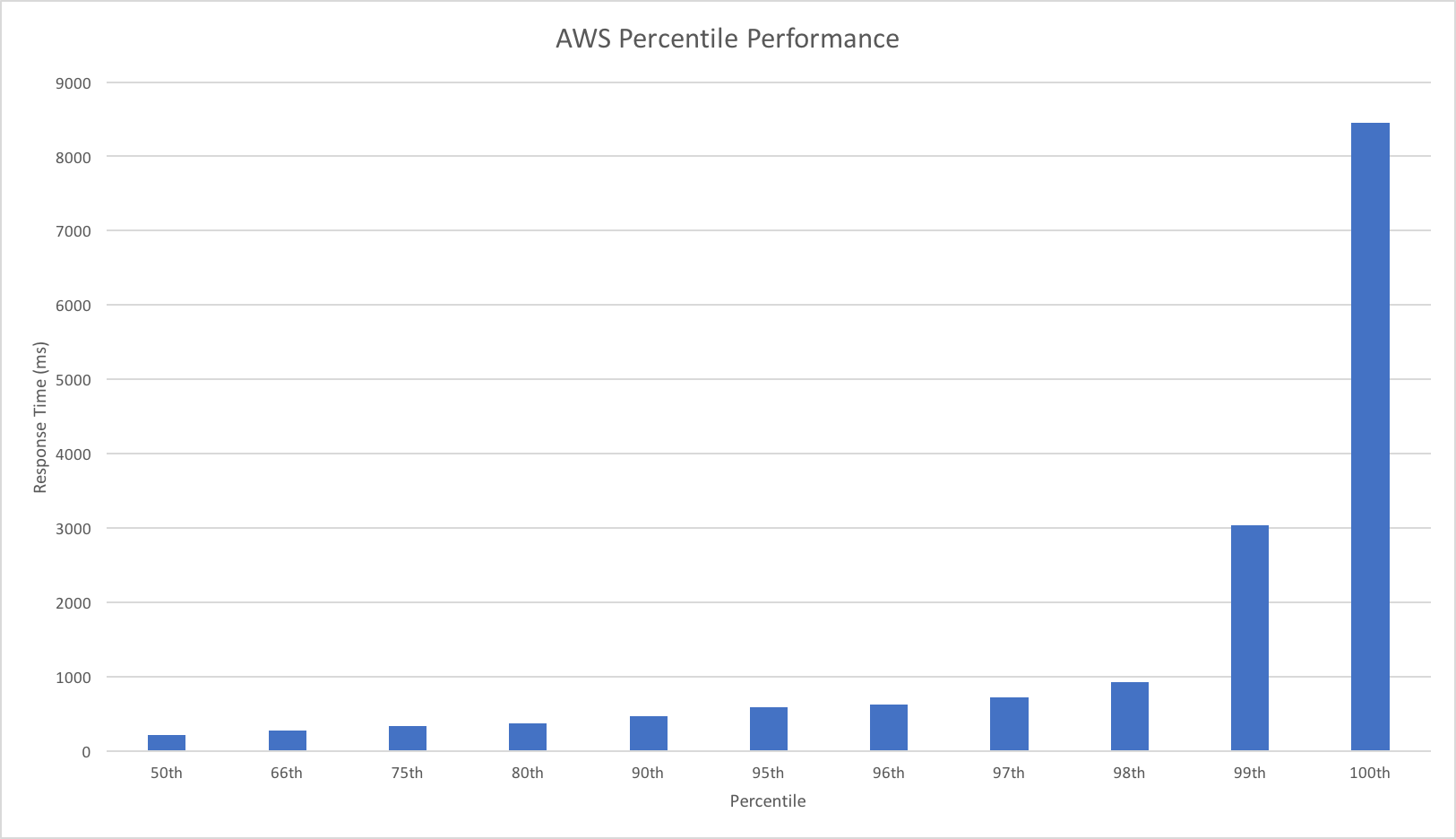

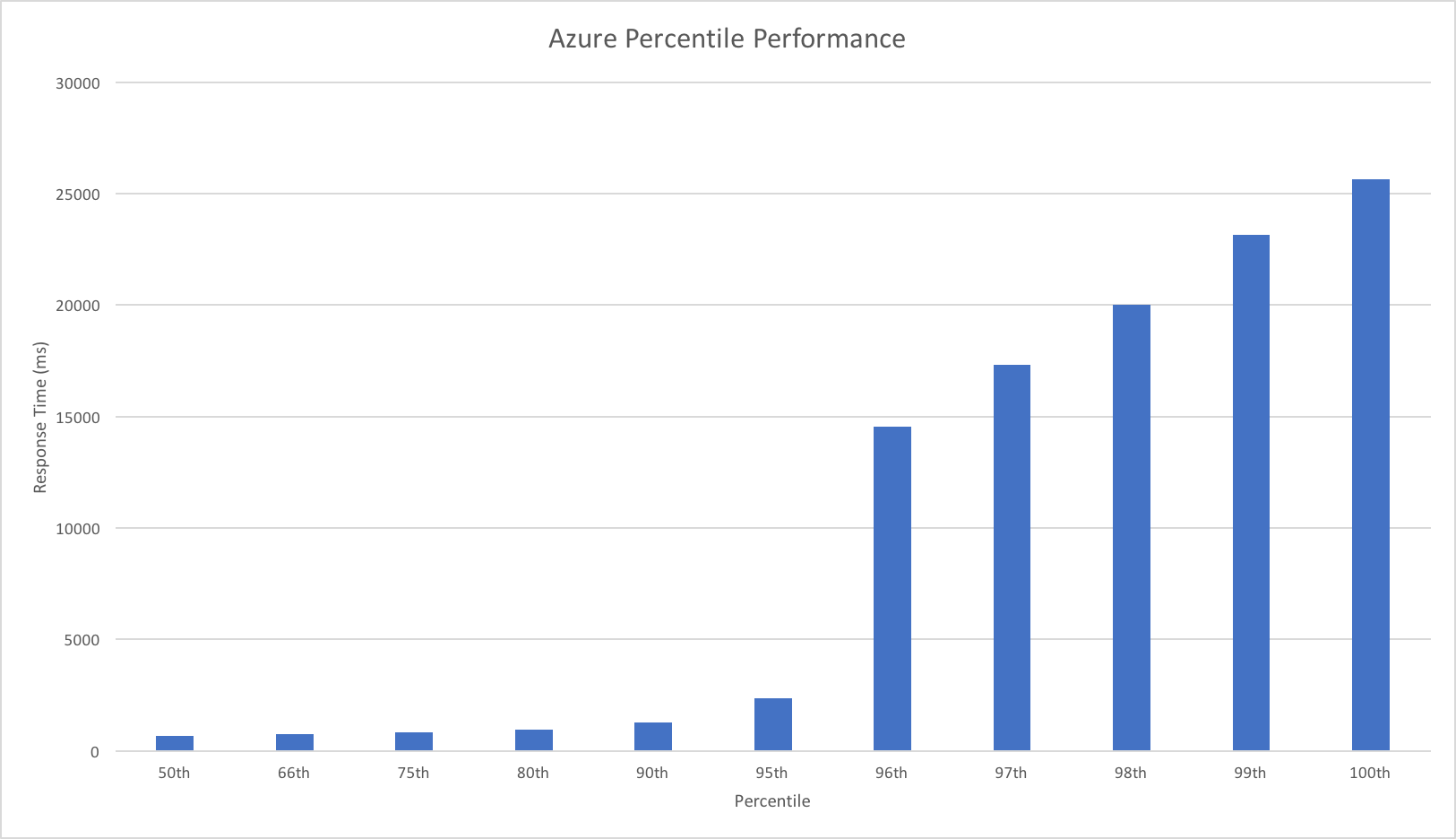

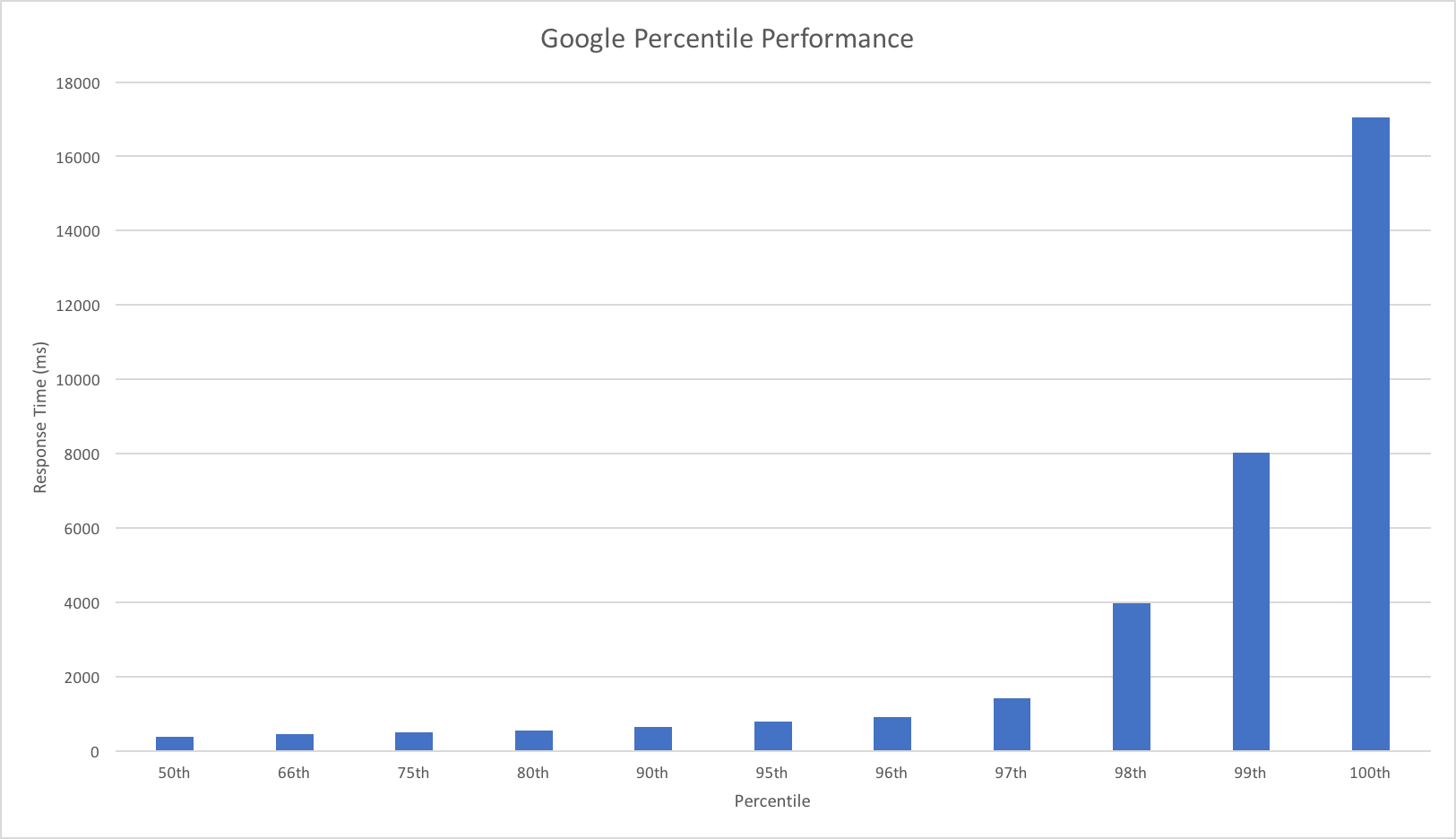

Percentile Performance

I was unable to obtain this data from VSTS and so resorted to running ApacheBench. For this test I used 100 concurrent requests for a total of 10,000 requests, collected the raw data, and processed it in Excel. Note that network conditions were less predictable for these tests and I wasn’t always as geographically close to the cloud function as I was in the other tests, though repeated runs yielded similar patterns:

AWS maintains a pretty steady response time up to and including the 98th percentile, but then shows marked dips in performance at the 99th and 100th percentiles, with a worst case of around 8.5 seconds.

Google dips in performance after the 97th percentile, with its 99th percentile roughly equivalent to AWS’s 100th percentile and its own 100th percentile being twice as slow.

Azure exhibits a significant dip in performance at the 96th percentile, with a sudden jump in response time from a not-great 2.5 seconds to 14.5 seconds, worse even than AWS’s 100th percentile. Beyond the 96th percentile, response time increases fairly steadily by around 2.5 seconds per percentile.
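As an alternative to Excel, the percentile table can be produced directly from ApacheBench’s raw output. This sketch assumes the data was written with the `-g` flag (for example `ab -n 10000 -c 100 -g results.tsv <function-url>`), which produces a tab-separated file whose header includes a `ttime` column holding each request’s total time in milliseconds; it uses the nearest-rank percentile definition, which may differ slightly from whichever Excel function was used for the charts. `parseAbGnuplot`, `percentile` and `percentileTable` are hypothetical names.

```typescript
// Extracts per-request total time (ms) from an ab -g TSV file, locating the
// column by its header name (assumed to be "ttime").
function parseAbGnuplot(tsv: string): number[] {
  const lines = tsv.trim().split(/\r?\n/);
  const header = lines[0].split("\t");
  const col = header.indexOf("ttime");
  if (col < 0) throw new Error("no ttime column in header");
  return lines.slice(1).map((line) => Number(line.split("\t")[col]));
}

// Nearest-rank percentile: the smallest sample such that at least p% of
// samples are less than or equal to it. Expects ascending input, p in (0, 100].
function percentile(sortedMs: readonly number[], p: number): number {
  if (sortedMs.length === 0) throw new Error("no samples");
  // Multiply before dividing so exact ranks are not lost to floating point.
  const rank = Math.ceil((p * sortedMs.length) / 100); // 1-based rank
  return sortedMs[Math.max(rank, 1) - 1];
}

// Builds a percentile -> latency table for p = 1..100.
function percentileTable(latenciesMs: readonly number[]): Map<number, number> {
  const sorted = [...latenciesMs].sort((a, b) => a - b);
  const table = new Map<number, number>();
  for (let p = 1; p <= 100; p++) table.set(p, percentile(sorted, p));
  return table;
}
```

Plotting the table’s values against p gives the same kind of tail curve discussed above, where the interesting behaviour is concentrated in the last few percentiles.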

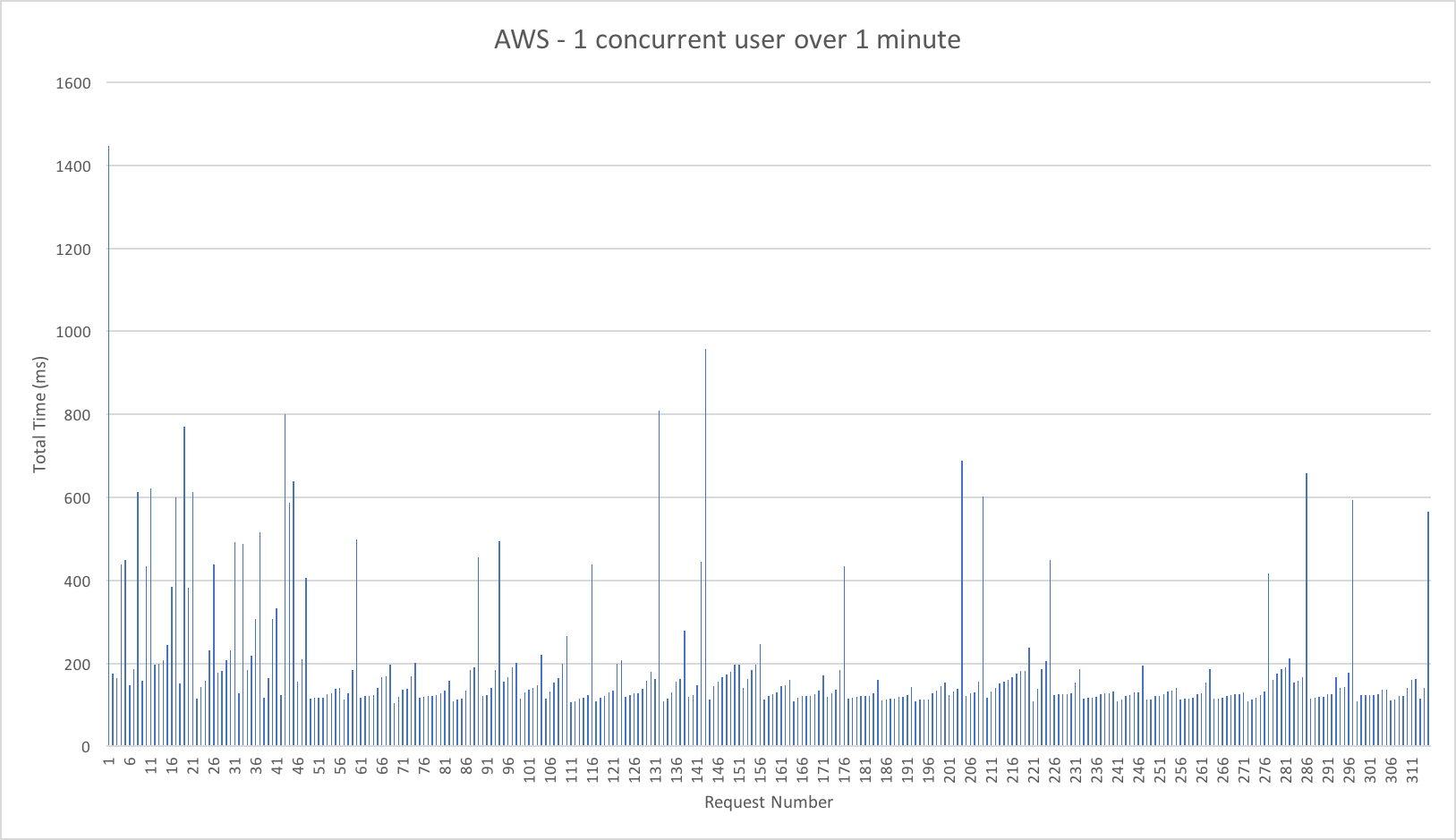

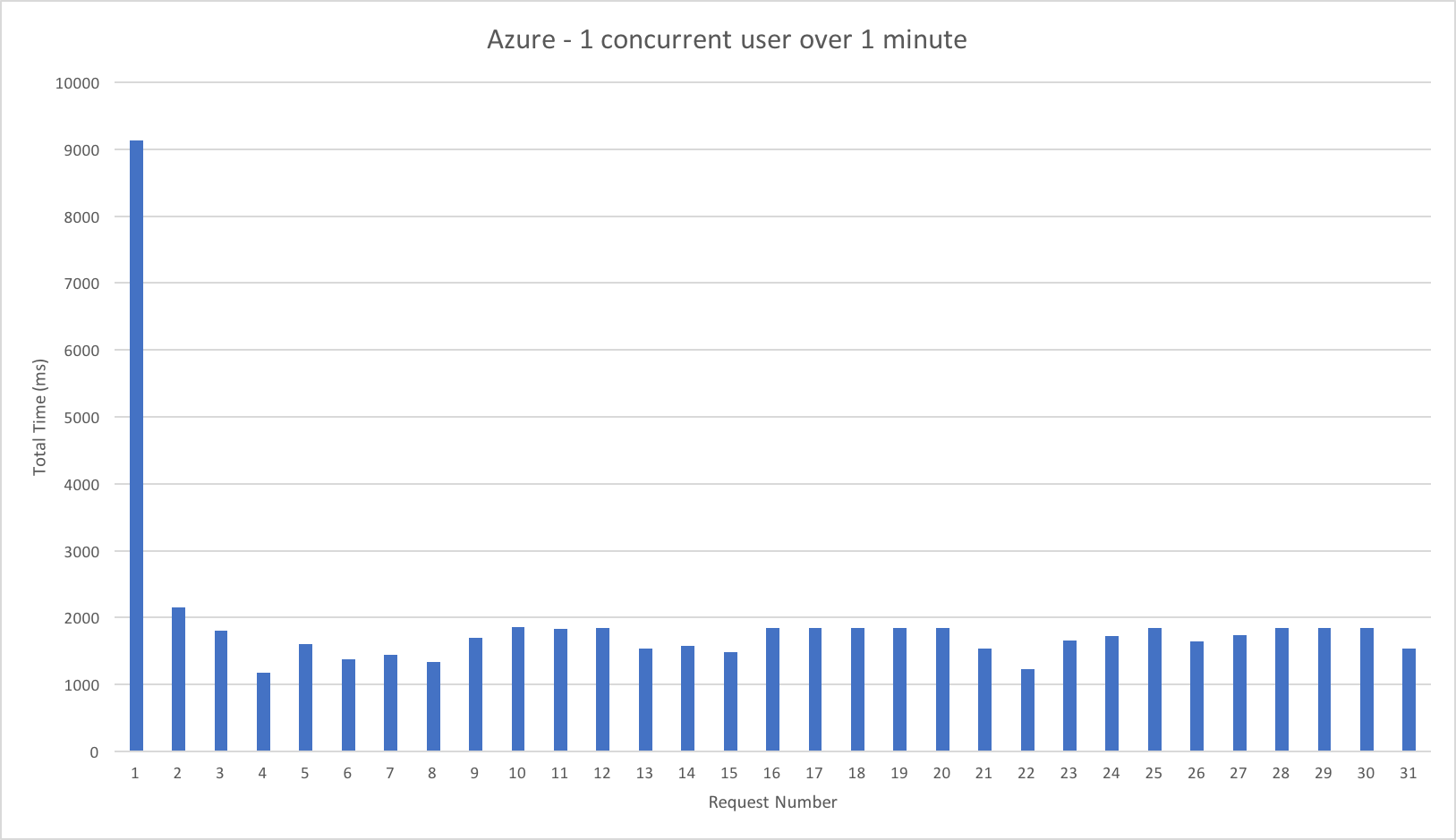

Cold Starts

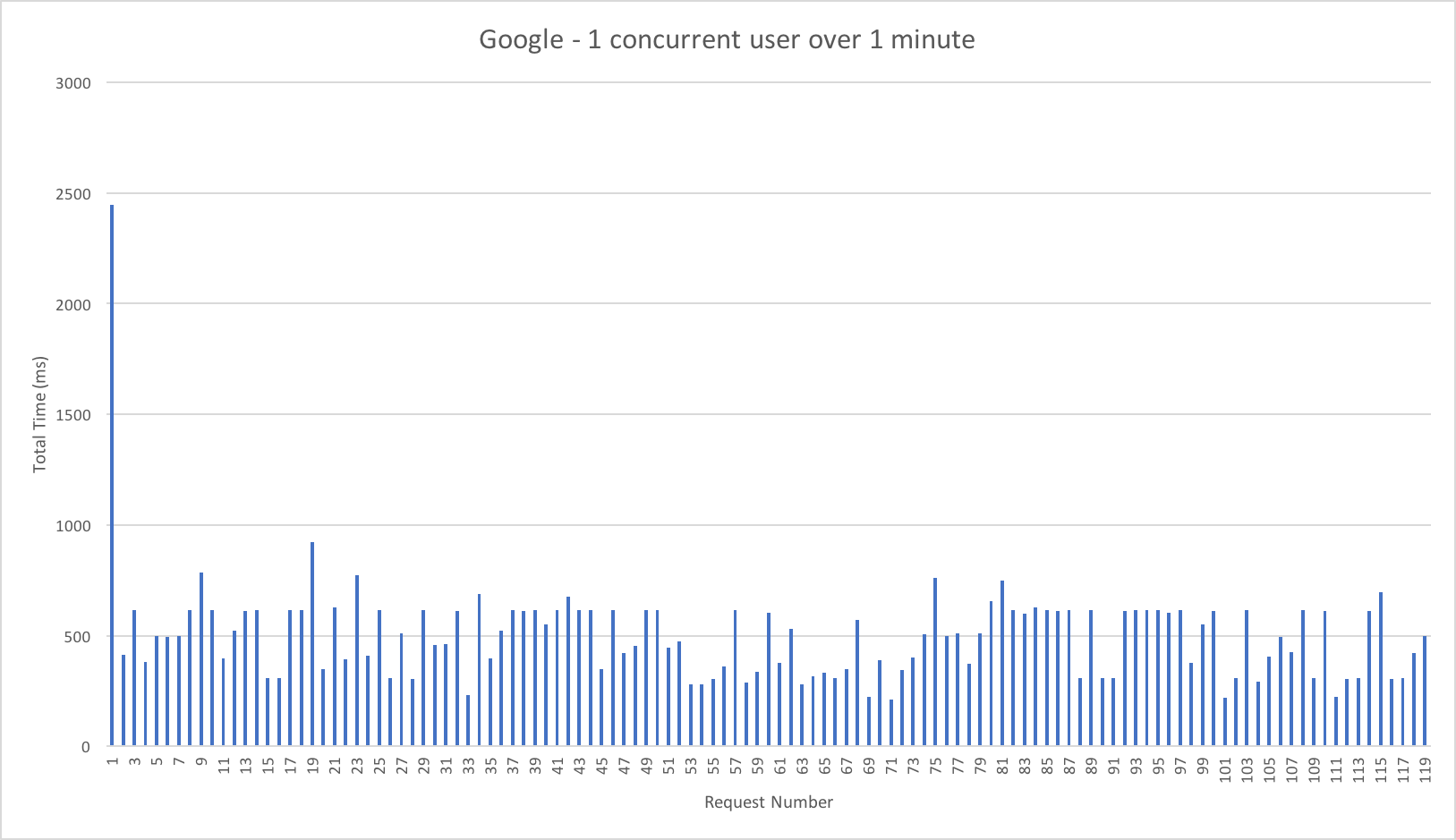

All the vendors’ solutions go “cold” after a period of inactivity, leading to a delay when they start. To get a sense of this, I left each vendor idle overnight and then had 1 user make repeated requests for 1 minute, to illustrate the cold start time and also to get a visual sense of request rate and variance in response time:

Again the results are striking. AWS has the lowest cold start time at around 1.5 seconds, Google is next at 2.5 seconds, and Azure is again the worst performer at 9 seconds. All three systems then settle into a fairly consistent response time, but these graphs show clearly how AWS Lambda’s significantly better performance translates into nearly 3x as many requests as Google and 10x as many as Azure over the minute.
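The single-user probe described above can be sketched as a loop that sends one request at a time for a fixed duration and records each latency, so the first entry captures the cold start and the count gives the request rate. This is an illustrative sketch rather than the tooling actually used: the HTTP call is injected as a hypothetical `send` function and the clock as `now` (defaulting to `Date.now`), so it can be exercised without a network.

```typescript
type Send = () => Promise<void>; // performs one request and resolves on response
type Clock = () => number;       // current time in milliseconds

interface ProbeResult {
  latenciesMs: number[]; // latenciesMs[0] includes any cold start delay
  requestCount: number;
}

// One "user" sending requests back to back until durationMs has elapsed.
async function singleUserProbe(send: Send, durationMs: number, now: Clock = Date.now): Promise<ProbeResult> {
  const latenciesMs: number[] = [];
  const start = now();
  while (now() - start < durationMs) {
    const t0 = now();
    await send(); // the next request starts only after this one completes
    latenciesMs.push(now() - t0);
  }
  return { latenciesMs, requestCount: latenciesMs.length };
}
```

Because a single sequential user completes roughly duration divided by mean response time requests, the request counts over the minute are effectively a proxy for steady-state response time, which is why a lower response time shows up directly as a multiple of the request count. On a Node version with a global `fetch`, `send` could be something like `async () => { await fetch(url); }`.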

It’s worth noting that the cold start time for the stock functions is almost exactly the same as for my main test case, indicating that the startup delay is related to the function itself rather than to storage IO.

Conclusions

AWS Lambda is the clear leader for HTTP-triggered functions: on all the runtimes I’ve tried it has the lowest response times, the best ability to deal with scale (at least within the volumes tested), and the most consistent performance. Google Cloud Functions are not far behind, and it will be interesting to see whether they can close the gap with optimisation work over the coming year; if they can reduce their flat-out response times they will probably pull level with AWS. The results are similar enough in their characteristics that I suspect Google and AWS have similar underlying approaches.

Unfortunately, as with the .NET scenarios, Azure is poor at handling HTTP-triggered functions, with very similar patterns on show. The Azure issues are not framework based but stem from how Azure hosts functions and handles scale. Hopefully over the next few months we’ll see improvements that make Azure a more viable host for HTTP serverless / API approaches when latency matters.

By all means use the above as a rough guide but ultimately whatever platform you choose I’d encourage you to build out the smallest representative vertical slice of functionality you can and test it.

Thanks for reading – hopefully this data is useful.
