
Having conducted my ARM and x64 tests on AWS yesterday, I was curious to see how Azure would fare. It doesn’t support ARM, but ARM is ultimately a mechanism for delivering value (performance and price) and not an end in and of itself. And so this evening I set about replicating the tests on Azure.

In the end I’ve massively limited my scope to two instance sizes:

- A2 – this has 2 CPUs and 4GB of RAM (much more RAM than yesterday’s instances) and costs $0.120 per hour

- B1S – a burstable VM that has 1 CPU and 1GB of RAM (so most similar to yesterday’s t2.micro) and costs $0.0124 per hour
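To put the two hourly prices side by side, here is a minimal sketch of the arithmetic. The 730-hour month is my assumption for illustration (the prices above are only quoted per hour), and the function names are hypothetical.

```python
# Hourly prices quoted in the list above (USD).
PRICE_PER_HOUR = {"A2": 0.120, "B1S": 0.0124}

# Assumption: 730 hours stands in for an average month of continuous running.
HOURS_PER_MONTH = 730


def monthly_cost(price_per_hour, hours=HOURS_PER_MONTH):
    """Cost of running one VM continuously for the given number of hours."""
    return price_per_hour * hours


def price_ratio(expensive, cheap):
    """How many times more the first hourly price is than the second."""
    return expensive / cheap


for name, price in PRICE_PER_HOUR.items():
    print(f"{name}: ${monthly_cost(price):.2f} per month")

ratio = price_ratio(PRICE_PER_HOUR["A2"], PRICE_PER_HOUR["B1S"])
print(f"A2 costs {ratio:.1f}x the B1S per hour")
```

On these figures the A2 is a little under ten times the hourly price of the B1S.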

Note – I’ve begun to conduct tests on the D series too; the preliminary finding is that the D1 is similar to the A2 in performance characteristics.

I was struggling to find Azure VMs with the same pricing as AWS and so had to start with a burstable VM to get something in the same kind of ballpark. Not ideal, but those are the cards you are dealt on Azure! I started with the B1S, which was still more expensive than the ARM VM. I created the VM, installed software, and ran the tests – the machine comes with 30 credits for bursting. However, after running the tests several times it was still performing consistently, so the credits were either exhausted quickly, made little difference, or were used consistently.

I moved to the A2_V2 because, frankly, the performance was dreadful on my early tests with the B1S and I also wanted something that wouldn’t burst. I was also trying to match the spec of the AWS machines – 2 cores and 1GB of RAM. I’ll attempt the same tests with a D series when I can.

The test setup was the same, and all tests were run on VMs accessed directly on their public IP using Apache as a reverse proxy to Kestrel and our .NET application.

I’ve left the t2.micro instance out of this analysis.

Mandelbrot

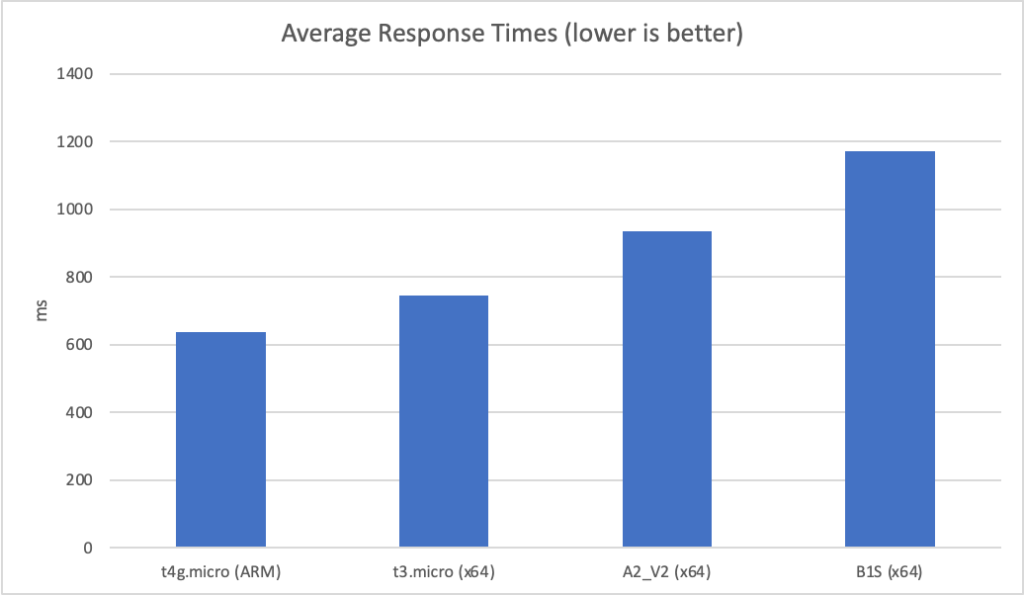

With 2 clients per second we see the following response times:

We can see that the two Azure instances are already off to a bad start on this computationally heavy test.

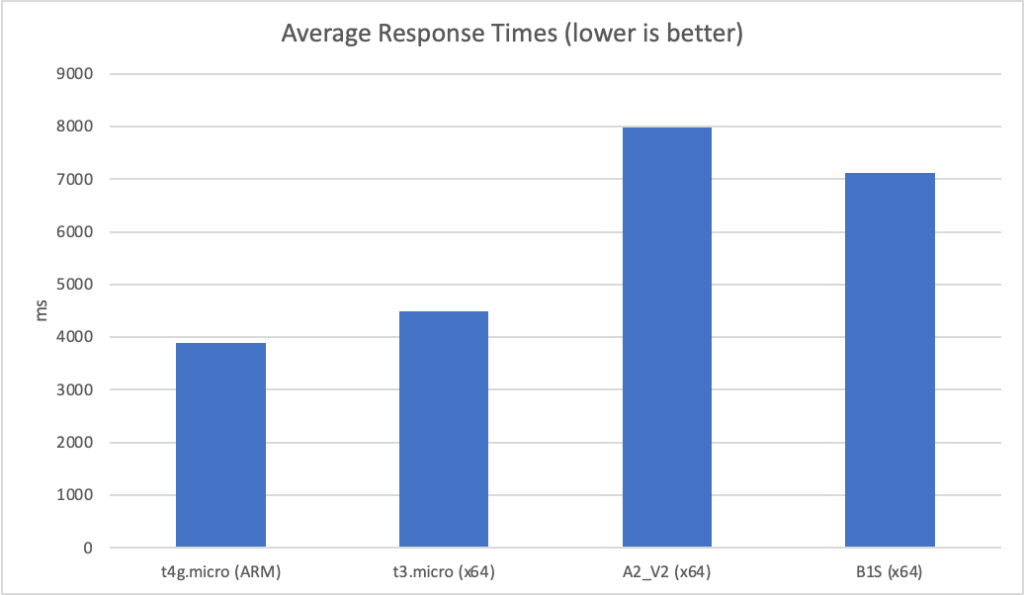

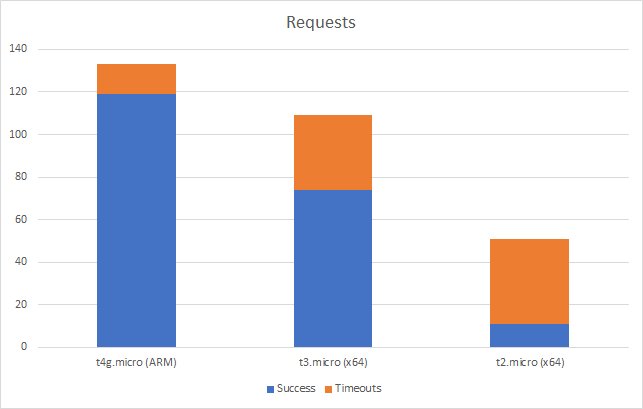

At 10 clients per second we continue to see this reflected:

However at this point the two Azure instances begin to experience timeout failures (the threshold being set at 10 seconds in the load tester):

The A2_V2 instance is faring particularly badly, especially given it is 10x the cost of the AWS instances.

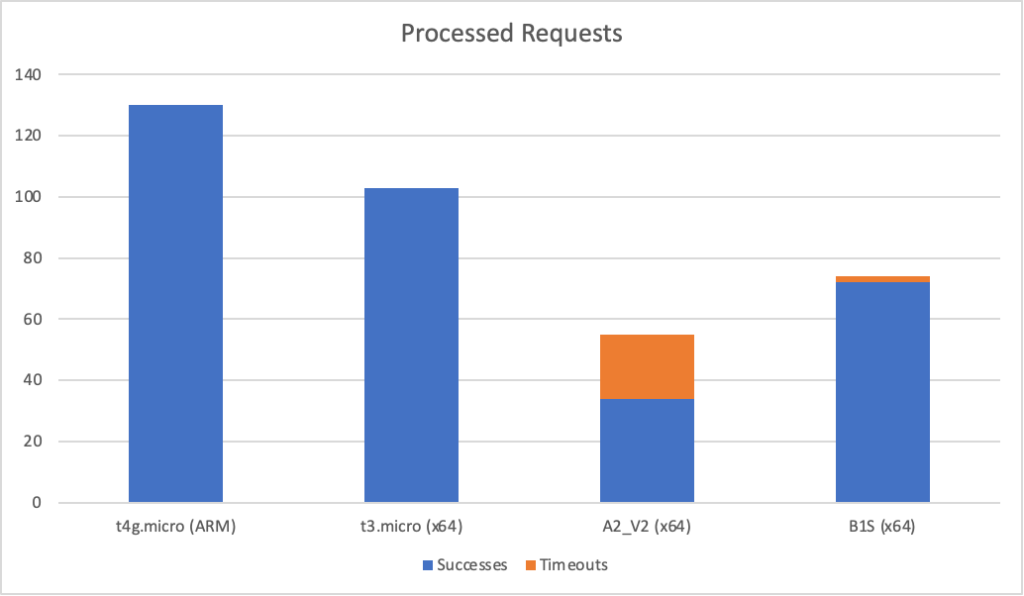

Unfortunately there is no meaningful comparison I can make under higher load as both Azure instances collapse when I push to 15 clients per second. For completeness’ sake, here are the results on AWS at 20 clients per second (average response and total requests):

Simulated Async Workload

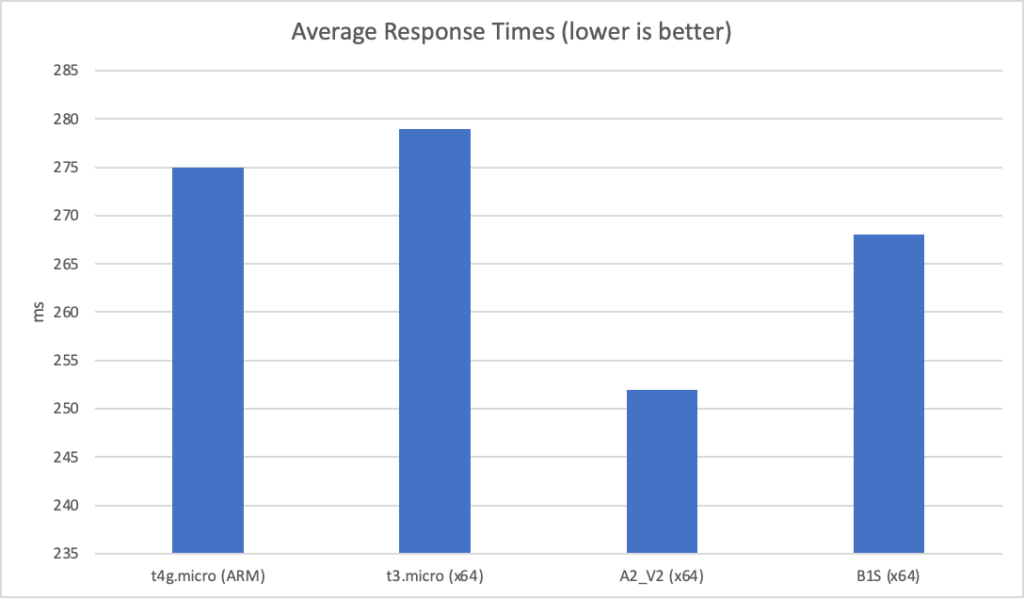

With our simulated async workload Azure fares better at low scale. Here are the results at 20 requests per second:

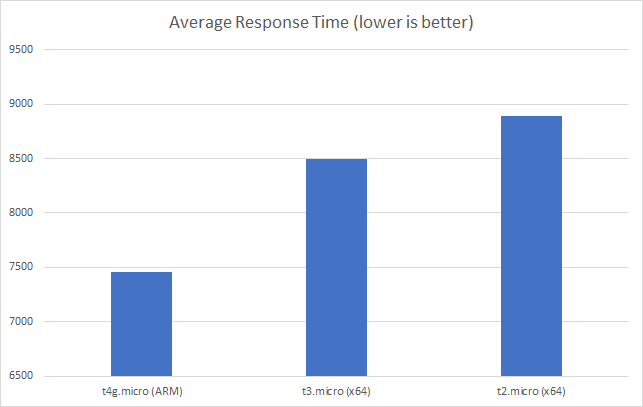

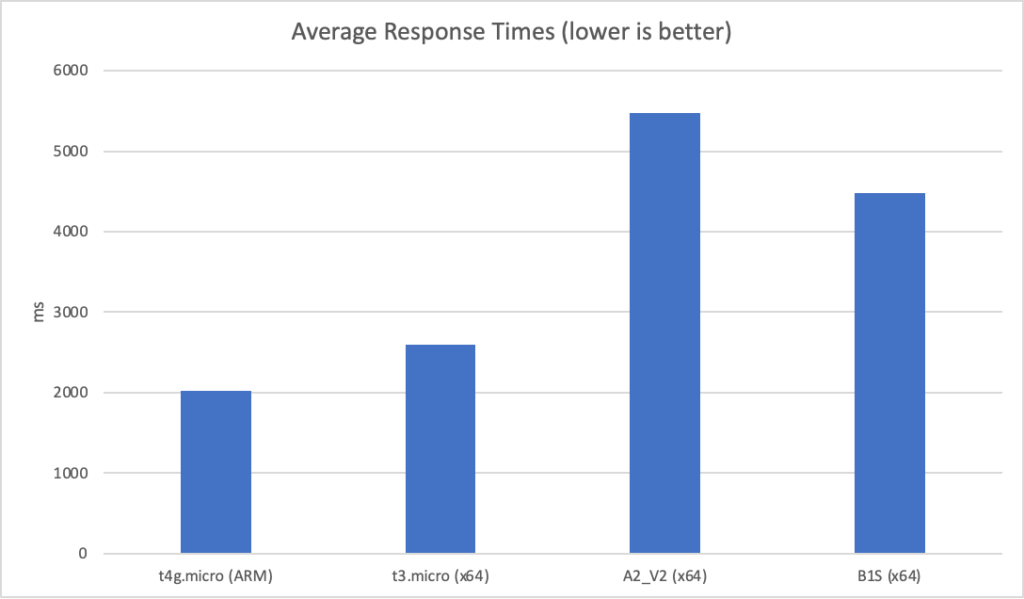

As we push the scale up things get interesting with different patterns across the two vendors. Here are the average response times at 200 clients per second:

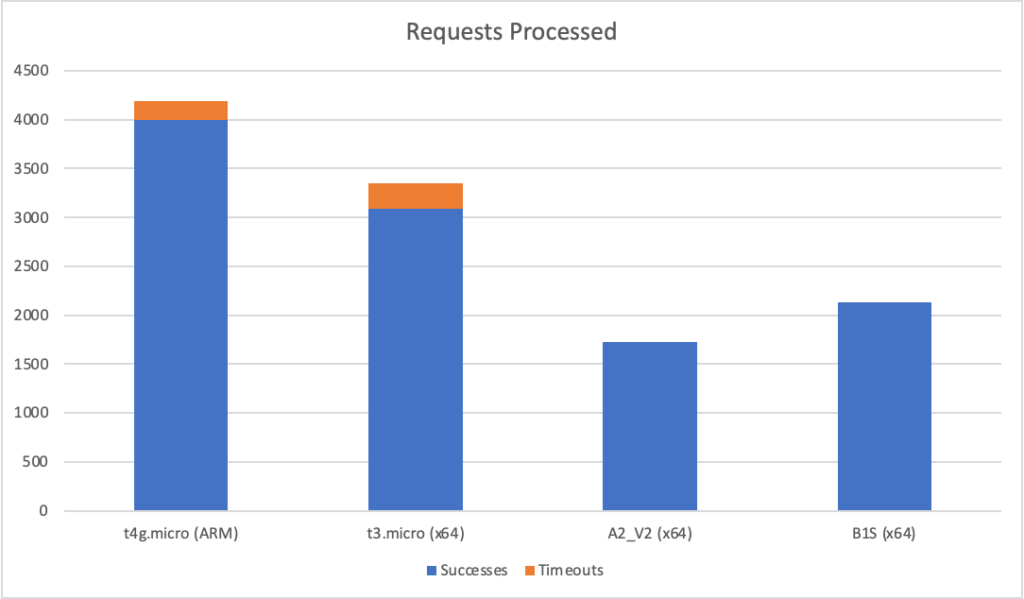

At first glance AWS looks to be running away with things; however, both the t4g.micro and t3.micro suffer from performance degradation at the extremes – the max response time is 17 seconds for both, while for the Azure instances it is around 9 seconds.

You can see this reflected in the success and total counts where the AWS instances see a number of timeout failures (> 10 seconds) while the Azure instances stay more consistent:

However the AWS instances have completed many more requests overall. I’ve not done a percentile breakdown (see comments yesterday) but it seems likely that at the edges AWS is fraying and degrading more severely than Azure leading to this pattern.
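For reference, a percentile breakdown could be produced along these lines. This is only a sketch using the nearest-rank method; the sample response times are made up for illustration and are not measurements from these tests.

```python
import math


def percentile(samples_ms, pct):
    """Nearest-rank percentile: the smallest sample with at least pct% of
    all samples at or below it."""
    if not samples_ms:
        raise ValueError("no samples")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in the range (0, 100]")
    ordered = sorted(samples_ms)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[rank - 1]


# Hypothetical response times in milliseconds: mostly fast, with a slow tail.
sample = [240, 250, 260, 270, 280, 300, 320, 400, 9000, 17000]

mean = sum(sample) / len(sample)
print(f"mean={mean:.0f}ms")
for pct in (50, 90, 99):
    print(f"p{pct}={percentile(sample, pct)}ms")
```

With a long tail like this the mean sits far above the median, which is why an average alone can hide the kind of fraying at the edges described above.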

Conclusions

The different VMs clearly have different strengths and weaknesses; however, in the computational test the Azure results are disappointing – the VMs are more expensive yet, at best, offer performance with different characteristics (more consistent when pushed but lower average performance – pick your poison) and, at worst, offer much lower performance and far less value for money. They seem to struggle with computational load and nosedive rapidly when pushed in that scenario.
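One rough way to put a number on "value for money" is successful responses per dollar of hourly price. The sketch below uses the two Azure rows for Mandelbrot at 10 clients per second and the hourly prices quoted earlier. It assumes the A2 price applies to the A2_V2 rows, and the index itself is my own illustrative measure, not something the load tester reports.

```python
def successes_per_dollar_hour(successes, price_per_hour):
    """Successful responses in one test run divided by the VM's hourly price.

    Only meaningful as a relative index between runs of the same test.
    """
    if price_per_hour <= 0:
        raise ValueError("price_per_hour must be positive")
    return successes / price_per_hour


# Mandelbrot at 10 clients per second, taken from the results table.
# Assumption: the $0.120 price quoted for the A2 applies to the A2_V2.
AZURE_MANDELBROT_10 = {
    "A2_V2": {"successes": 34, "price_per_hour": 0.120},
    "B1S": {"successes": 72, "price_per_hour": 0.0124},
}

value_index = {
    name: successes_per_dollar_hour(**row)
    for name, row in AZURE_MANDELBROT_10.items()
}

for name, score in value_index.items():
    print(f"{name}: {score:.0f} successful responses per $/hour")
```

The same function could be fed the AWS rows once their hourly prices are plugged in.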

Full Results

| Test | Vendor | Instance | Clients per second | Min (ms) | Max (ms) | Average (ms) | Successful Responses | Timeouts |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Mandelbrot | AWS | t4g.micro (ARM) | 2 | 618 | 751 | 638 | 60 | 0 |
| Mandelbrot | AWS | t4g.micro (ARM) | 5 | 765 | 2794 | 1709 | 132 | 0 |
| Mandelbrot | AWS | t4g.micro (ARM) | 10 | 761 | 6958 | 3882 | 130 | 0 |
| Mandelbrot | AWS | t4g.micro (ARM) | 15 | 759 | 10203 | 5704 | 127 | 1 |
| Mandelbrot | AWS | t4g.micro (ARM) | 20 | 802 | 10207 | 7459 | 119 | 14 |
| Mandelbrot | AWS | t3.micro (x64) | 2 | 701 | 885 | 744 | 60 | 0 |
| Mandelbrot | AWS | t3.micro (x64) | 5 | 878 | 3313 | 2069 | 108 | 0 |
| Mandelbrot | AWS | t3.micro (x64) | 10 | 855 | 8037 | 4498 | 103 | 0 |
| Mandelbrot | AWS | t3.micro (x64) | 15 | 973 | 10202 | 6930 | 84 | 9 |
| Mandelbrot | AWS | t3.micro (x64) | 20 | 1030 | 10215 | 8495 | 74 | 35 |
| Mandelbrot | AWS | t2.micro (x64) | 2 | 675 | 1140 | 1010 | 58 | 0 |
| Mandelbrot | AWS | t2.micro (x64) | 5 | 651 | 5324 | 3332 | 72 | 0 |
| Mandelbrot | AWS | t2.micro (x64) | 10 | 1867 | 10193 | 6999 | 56 | 8 |
| Mandelbrot | AWS | t2.micro (x64) | 15 | 1445 | 10203 | 9458 | 32 | 44 |
| Mandelbrot | AWS | t2.micro (x64) | 20 | 1486 | 10206 | 8895 | 11 | 40 |
| Mandelbrot | Azure | A2_V2 (x64) | 2 | 917 | 927 | 934 | 60 | 0 |
| Mandelbrot | Azure | A2_V2 (x64) | 5 | 1263 | 6649 | 3975 | 56 | 0 |
| Mandelbrot | Azure | A2_V2 (x64) | 10 | 1205 | 10203 | 7985 | 34 | 21 |
| Mandelbrot | Azure | A2_V2 (x64) | 15 | ERROR RATE TOO HIGH | | | | |
| Mandelbrot | Azure | A2_V2 (x64) | 20 | ERROR RATE TOO HIGH | | | | |
| Mandelbrot | Azure | B1S (x64) | 2 | 670 | 2551 | 1171 | 57 | 0 |
| Mandelbrot | Azure | B1S (x64) | 5 | 1612 | 5521 | 3252 | 72 | 0 |
| Mandelbrot | Azure | B1S (x64) | 10 | 1259 | 10001 | 7115 | 72 | 2 |
| Mandelbrot | Azure | B1S (x64) | 15 | ERROR RATE TOO HIGH | | | | |
| Mandelbrot | Azure | B1S (x64) | 20 | ERROR RATE TOO HIGH | | | | |
| Async | AWS | t4g.micro (ARM) | 20 | 236 | 371 | 275 | 600 | 0 |
| Async | AWS | t4g.micro (ARM) | 50 | 222 | 4178 | 373 | 1498 | 0 |
| Async | AWS | t4g.micro (ARM) | 100 | 231 | 414 | 286 | 2994 | 0 |
| Async | AWS | t4g.micro (ARM) | 200 | 310 | 17388 | 2028 | 3995 | 200 |
| Async | AWS | t3.micro (x64) | 20 | 233 | 402 | 279 | 600 | 0 |
| Async | AWS | t3.micro (x64) | 50 | 235 | 4912 | 407 | 1498 | 0 |
| Async | AWS | t3.micro (x64) | 100 | 235 | 545 | 292 | 2994 | 0 |
| Async | AWS | t3.micro (x64) | 200 | 234 | 17376 | 2598 | 3089 | 260 |
| Async | AWS | t2.micro (x64) | 20 | 242 | 412 | 298 | 600 | 0 |
| Async | AWS | t2.micro (x64) | 50 | 241 | 545 | 312 | 1497 | 0 |
| Async | AWS | t2.micro (x64) | 100 | 244 | 9829 | 2260 | 1989 | 0 |
| Async | AWS | t2.micro (x64) | 200 | 347 | 17375 | 3858 | 2118 | 252 |
| Async | Azure | A2_V2 (x64) | 20 | 173 | 343 | 252 | 600 | 0 |
| Async | Azure | A2_V2 (x64) | 50 | 196 | 504 | 274 | 1498 | 0 |
| Async | Azure | A2_V2 (x64) | 100 | 239 | 4240 | 2484 | 1794 | 0 |
| Async | Azure | A2_V2 (x64) | 200 | 423 | 8929 | 5475 | 1725 | 0 |
| Async | Azure | B1S (x64) | 20 | 206 | 383 | 268 | 580 | 0 |
| Async | Azure | B1S (x64) | 50 | 209 | 436 | 278 | 1498 | 0 |
| Async | Azure | B1S (x64) | 100 | 292 | 3151 | 1892 | 2252 | 0 |
| Async | Azure | B1S (x64) | 200 | 482 | 7708 | 4474 | 2136 | 0 |
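To compare the timeout columns on a like-for-like basis, the counts can be turned into a rate. This is a small sketch over the four Async rows at 200 clients per second. It assumes that every request ended as either a successful response or a timeout, which the table suggests but does not state.

```python
def timeout_rate(successes, timeouts):
    """Fraction of completed requests that timed out.

    Assumption: total requests = successful responses + timeouts.
    """
    total = successes + timeouts
    return timeouts / total if total else 0.0


# (successful responses, timeouts) for the Async test at 200 clients per second.
ASYNC_200 = {
    "t4g.micro": (3995, 200),
    "t3.micro": (3089, 260),
    "A2_V2": (1725, 0),
    "B1S": (2136, 0),
}

rates = {name: timeout_rate(ok, timed_out) for name, (ok, timed_out) in ASYNC_200.items()}

for name, rate in rates.items():
    print(f"{name}: {rate:.1%} of requests timed out")
```

On these rows the AWS instances time out on a single-digit percentage of requests while completing more requests overall, and the Azure instances record no timeouts.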
