
(I’m happy to engage with anyone at MS / Azure about this but I don’t think there is any new feedback here sadly – it’s a “more of the same” thing)

This last week I needed to deploy a greenfield system to Azure for the first time in a good while and so it seemed like a good point to reflect on the state of Azure deployment. tl;dr – it was like pulling teeth.

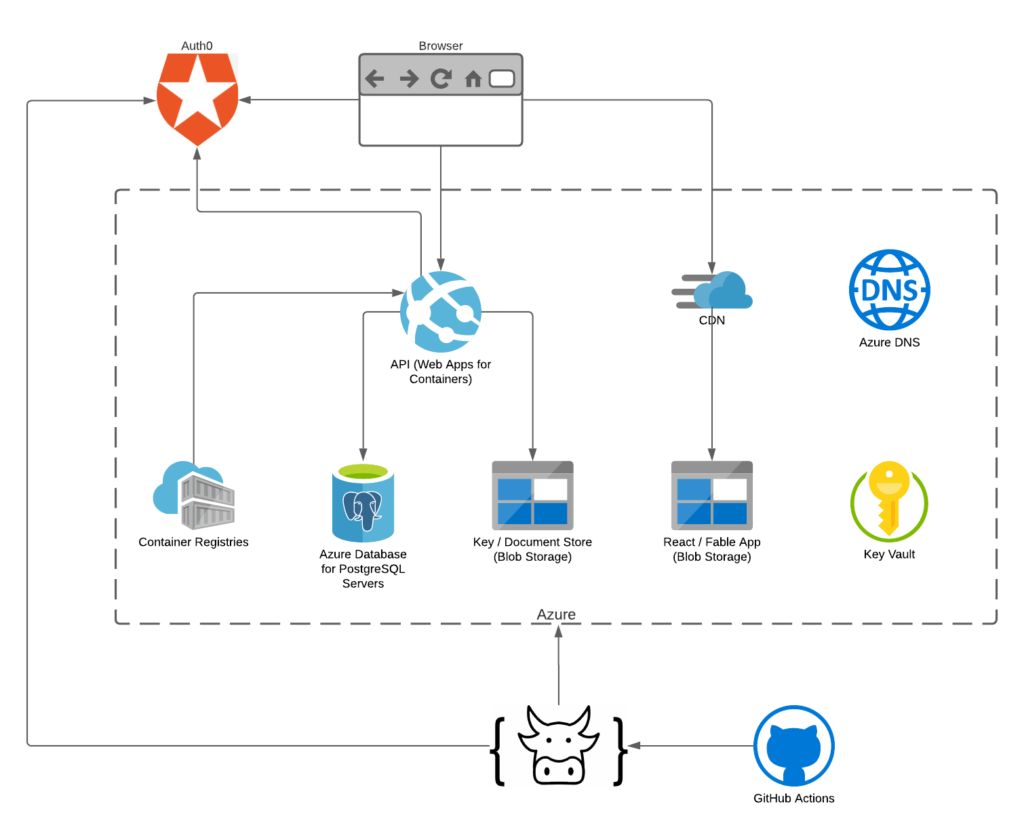

The system is fairly typical – it uses a variety of Azure components to allow users to access a React (Fable) based website that talks to APIs (.NET 5), a PostgreSQL database and a simple blob storage based key/document store, all so that users can manage risk and capabilities in their organisations.

As it’s greenfield I’ve gone all in on managed identity and Key Vault wherever possible. I use Docker to run the API within Web Apps for Containers and I use the Azure CDN backed by a storage account to serve the React app.

Build, release and deployment occur via a GitHub Action with the real work taking place inside Farmer, and so ultimately the deployment is based on ARM.

The system is written end to end in F# and the architecture is shown in the diagram above.

Just a couple of notes on this architecture based on common questions.

- Why not use AKS? For something this simple? Not needed – massive overkill.

- Why use Docker then? I value being able to move things between vendors. For example I’ve experimented with this deployment in AWS, Azure and Digital Ocean. Using fairly standardised packaging mechanisms means I’m generally just worrying about configuration.

- Why not use Azure Static Web Apps? Somehow this is still in preview, I already knew how to do the same thing with the CDN, and I had to do a “manual” build anyway as I am using Fable (which isn’t hard – you can find some notes about that here).

- I thought you loved Service Bus, where’s the Service Bus? v1.1 🙂

The Good

- Farmer is excellent – I’m a big fan of using actual code for infrastructure and see no reason at all to learn another (stunted) language like Bicep to do so. Pages of ARM become just a handful of lines of Farmer code and as it ultimately outputs and (optionally) executes ARM templates you’re not locked into anything. My final build and release script is entirely within F# – it’s not a Frankenstein’s monster of JSON, Bash, PowerShell etc., though I do call out to az cli on occasion to work round problems in ARM.

At this point I’ve used ARM itself, Farmer, Pulumi, Terraform and played with Bicep and my favourite of these is definitely Farmer. It saves time and reduces cognitive load.

- Managed Identity now feels pretty usable within Azure. Last time I tried it, support was so patchy it just wasn’t worth the effort. This time round, although it isn’t supported everywhere, it is in many places, is fairly well documented, and supports local development easily – again, last time I experimented with it local development (at least on a Mac at the time) was somewhat painful.

- The Azure Portal is useful when you’re dealing with multiple services. It’s got its issues for sure but it does bring things together in a helpful way.

- Log Streaming for Web Apps is great – you can flick to that tab and quickly see application related issues around startup within your containers.

The Bad

- Error reporting from the Azure Resource Manager itself is still dreadful. At times I was faced by utterly opaque errors and at others completely misleading errors. I’m 95% sure that if I cracked the lid on the underlying code I would find things like “try …. catch all… log generic error”.

Additionally, what are basically validation errors (“you can’t do this with that”) are left to the various resources to handle. The problem with this is that they won’t surface until a long way into a deployment, which means the feedback loop for development is torturously slow.

You can burn days on this stuff. And I understand the need to decouple resource deployment from the orchestration of it but it’s not hard to see how validation could also be decoupled and done at an earlier stage for many errors.

- Read the small print! Things in Azure are in a constant state of moving forward – and this is great for the most part – but when the things that are moving are foundational you find they are only partially supported and that there are caveats. And you can still get surprised by things if you are a new Azure user: for example Functions not supporting .NET 5.

- Things are declarative until they are not! The defence of ARM, and now Bicep, is that it’s declarative. The problem is it really isn’t – it requires orchestrating and some of this is in areas that really need smoothing out.

A great example of this is deployment from ACR to Web Apps for Containers. The Web App for Containers won’t deploy with CI/CD turned on until the ACR is both created and has an image inside it. This immediately means I have to split my deployment into two ARM templates and orchestrate between the two. This is such a common use case it really ought to be smoothed out – let the Web App be created but stay empty until an ACR is created and a container pushed. If I need to restart the web app, ok, but don’t prevent me creating it.

- Bugs. Even in this simple deployment I’ve come across a few of these. Just a couple of examples below but the real issue is that when you combine this with the above issues you end up with very confusing situations.

- Granting my web app’s managed identity access to blob storage fails on repeated runs of the ARM template. It will work when the account is created but fail on subsequent deployments. Workaround: do it using Az CLI.

- For reasons unknown Key Vault references are not working on the Web App. Workaround: don’t use them, use the same managed identity to load the secrets into the ASP.NET Core app configuration with AddAzureKeyVault(). I’m hoping this is something that can be resolved but I’ve tried all sorts of things with no luck yet.
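For anyone hitting the same blob storage role assignment problem, the Az CLI workaround can be sketched roughly as below. This is an illustration under assumptions rather than the script used in this deployment: the `Storage Blob Data Contributor` role, the use of a system-assigned identity, the function name `grant_blob_access` and the `AZ` override variable are all hypothetical choices, and the resource names are whatever you pass in.

```shell
#!/usr/bin/env bash
# Sketch only: role, identity type and names are assumptions, not the original setup.
# AZ can be overridden (for example AZ=echo) to print the commands instead of running them.
AZ="${AZ:-az}"

# Grant a web app's system-assigned identity access to blobs in a storage account.
# Args: resource group, web app name, storage account name.
grant_blob_access() {
  local rg="$1" app="$2" account="$3"
  local principal_id scope

  # Object ID of the web app's system-assigned managed identity.
  principal_id="$("$AZ" webapp identity show \
    --resource-group "$rg" --name "$app" \
    --query principalId --output tsv)"

  # Full resource ID of the storage account, used as the assignment scope.
  scope="$("$AZ" storage account show \
    --resource-group "$rg" --name "$account" \
    --query id --output tsv)"

  # Assumed role: pick whichever built-in role matches the access you need.
  "$AZ" role assignment create \
    --assignee-object-id "$principal_id" \
    --assignee-principal-type ServicePrincipal \
    --role "Storage Blob Data Contributor" \
    --scope "$scope"
}
```

Calling `grant_blob_access my-rg my-app mystorage` after the ARM deployment has finished keeps the role assignment out of the template, which is the part that was failing on repeat runs.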

Closing Thoughts

Deploying to Azure is still a painful and unpredictable task for developers. It shouldn’t be. As a point of comparison this took me two days to complete for dev and live environments (the latter took about an hour) – I deployed a very similar system into AWS a couple of months back knowing absolutely nothing about AWS and it also took two days. Given I’ve been working with Azure for 10+ years that’s really disappointing.

I think one of the reasons it’s such a muddle is that Azure has been built “top down” rather than bottom up. The underlying compute, network and identity platform have been patched in under the original rather humble set of PaaS services. AWS on the other hand feels like it was built bottom up and so while it can feel lower level than Azure it also feels more consistent and predictable.

Why don’t these time burning and infuriating issues get attention? I think there are two things at play.

Firstly – Conway’s Law. Microsoft is huge and you can see the walls between the teams and divisions bleeding into common areas like this, and there doesn’t seem to be a guiding set of minimum standards for Azure teams. And if there are, they are clearly high up on the list of things to be sacrificed when things are running late.

Secondly – these things aren’t sexy. They can’t be launched at a huge PR event. They don’t result in a 5 minute demo. They don’t sell to CTOs of large, big-spending businesses who are a long way from this stuff, and the cost of these issues is often hidden – it’s buried in the minutiae.

Thirdly – Microsoft is at war with Eurasia and it’s always been at war with Eurasia. It’s hard to announce you’re working on fixing something that is really sub-standard without admitting you have something really sub-standard. And so, according to the marketing, things are awesome until they can be replaced by a new feature that can be celebrated. Take ARM as an example – celebrated and championed, despite complaints of real world users, right up until Bicep when it became IL (though ironically it seems all the same hacks are still needed).

As ever I’m sure the Product teams are well meaning but like the rest of us they are wrapped in management, commercial concerns, and organisational systems that result in sub-optimal outcomes for users. Sadly we, as users of Azure, pay for this every day and the deployment area of Azure continues to feel at the sharp and expensive end of this.
